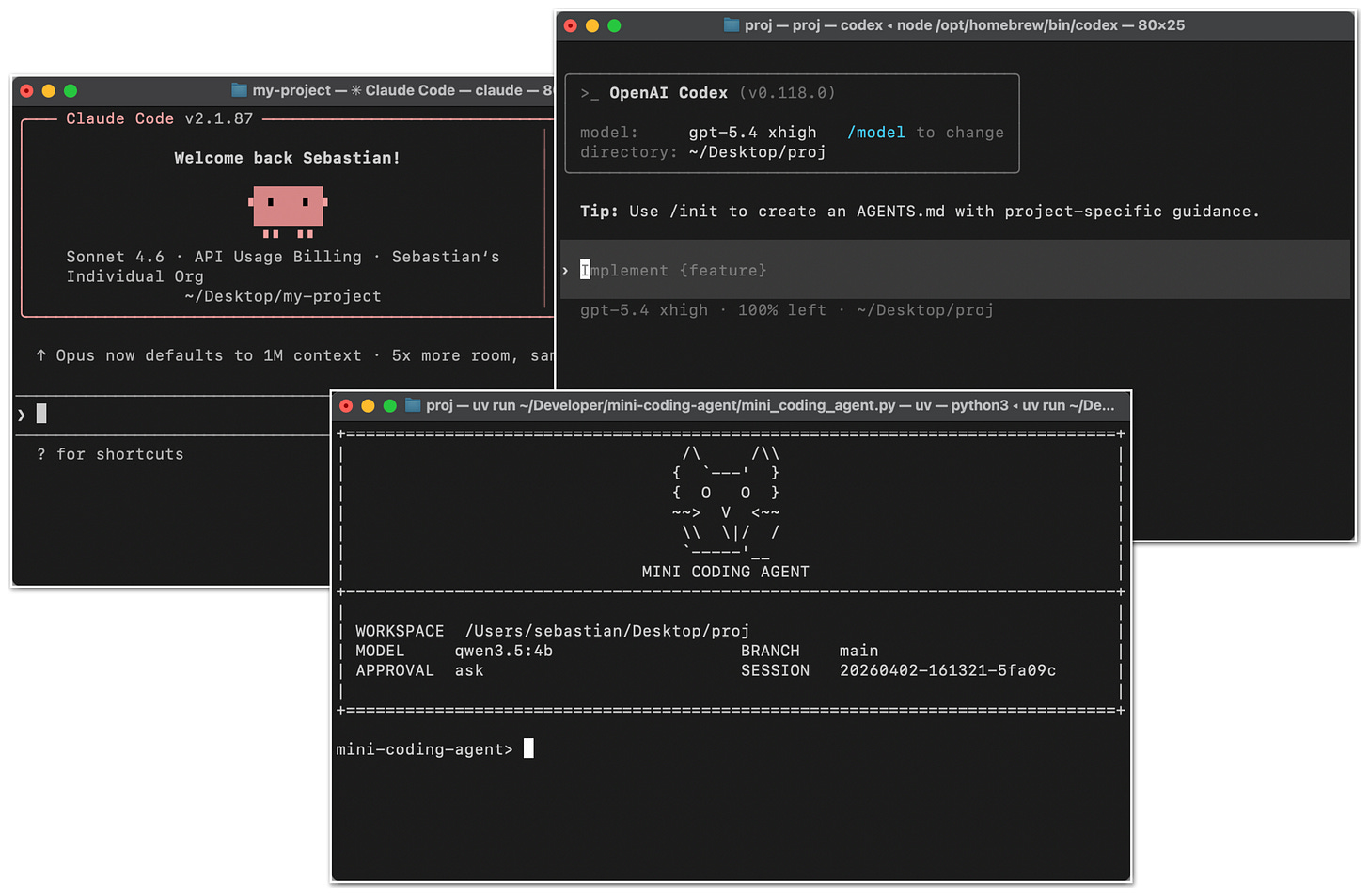

Components of a Coding Agent

How coding agents use tools, memory, and repo context to make LLMs work better in practice

In this article, I want to cover the overall design of coding agents and agent harnesses: what they are, how they work, and how the different pieces fit together in practice. Readers of my Build a Large Language Model (From Scratch) and Build a Large Reasoning Model (From Scratc…

Ahead of AI

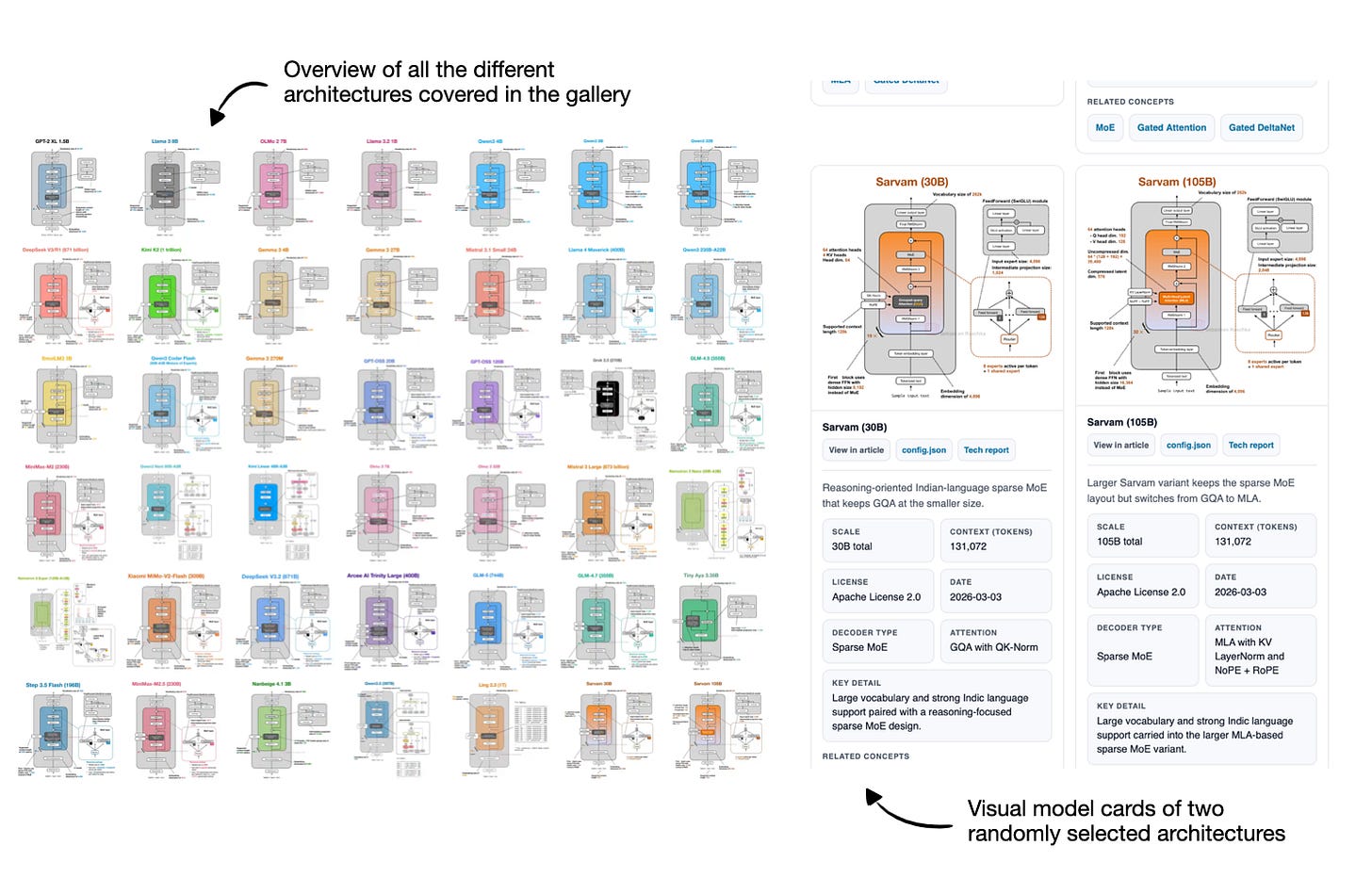

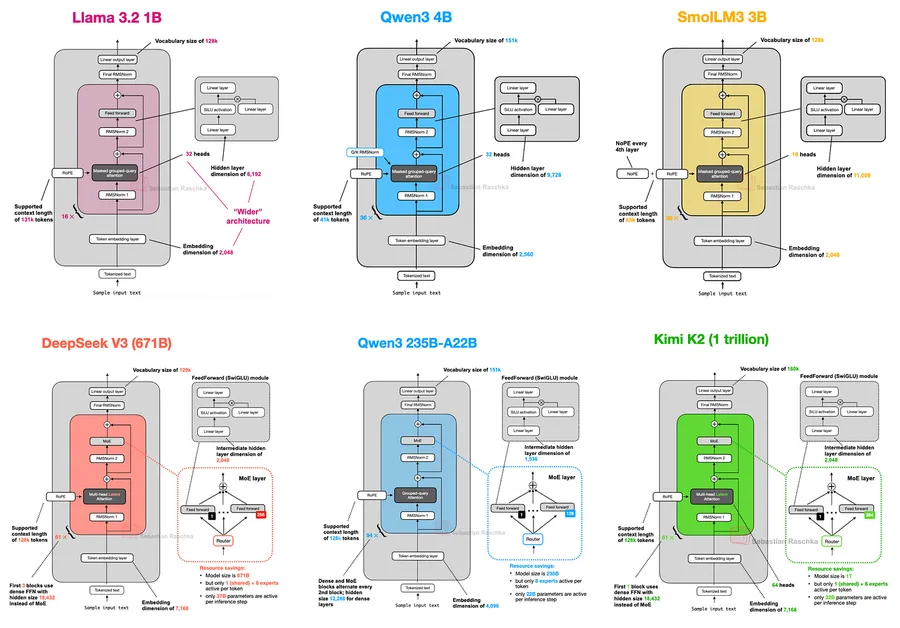

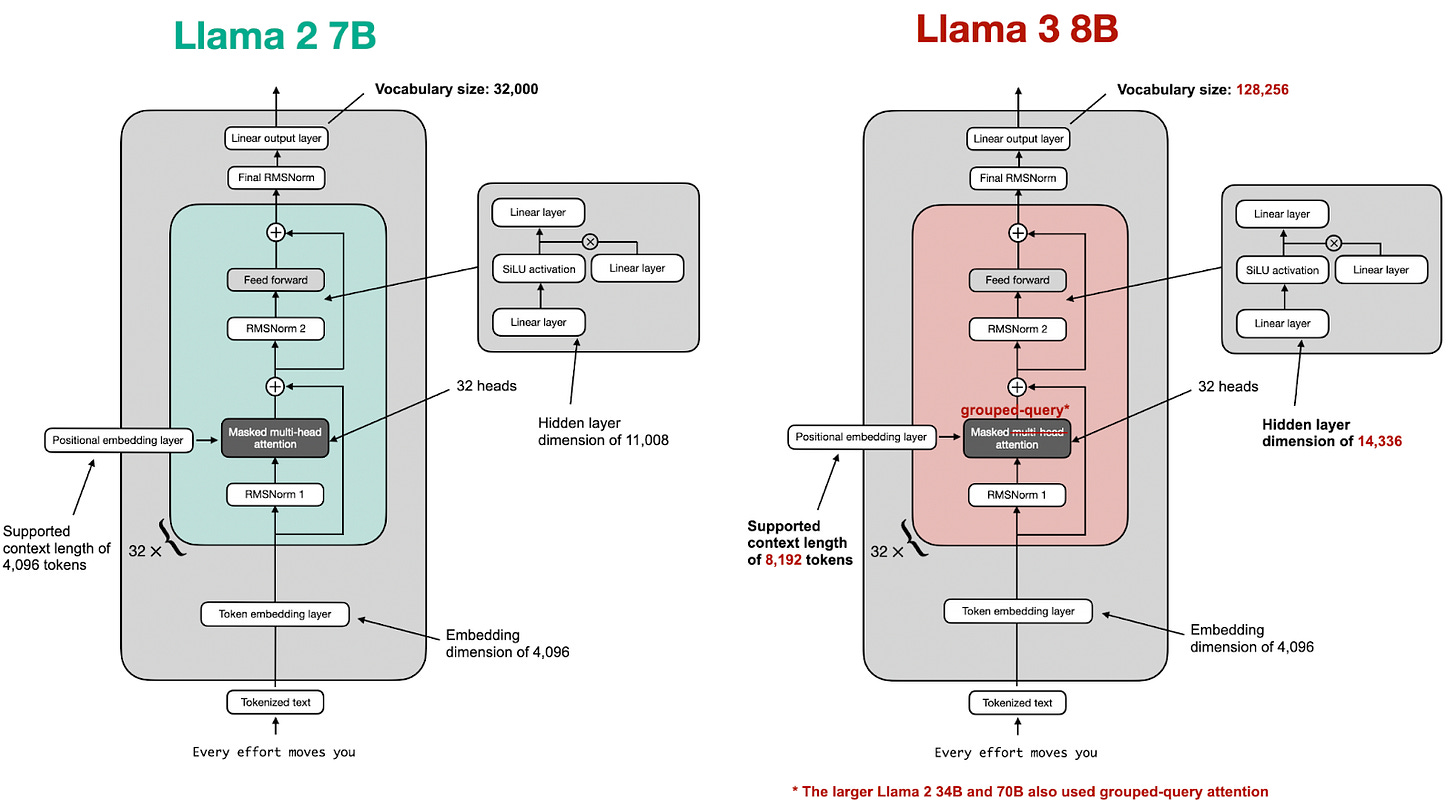

A Visual Guide to Attention Variants in Modern LLMs

From MHA and GQA to MLA, sparse attention, and hybrid architectures

I had originally planned to write about DeepSeek V4. Since it still hasn't been released, I used the time to work on something that had been on my list for a while, namely, collecting, organizing, and refining the different LLM architectures I have covered over the past few year…

ai, machine-learning

Sebastian Raschka, PhD

2/25/2026

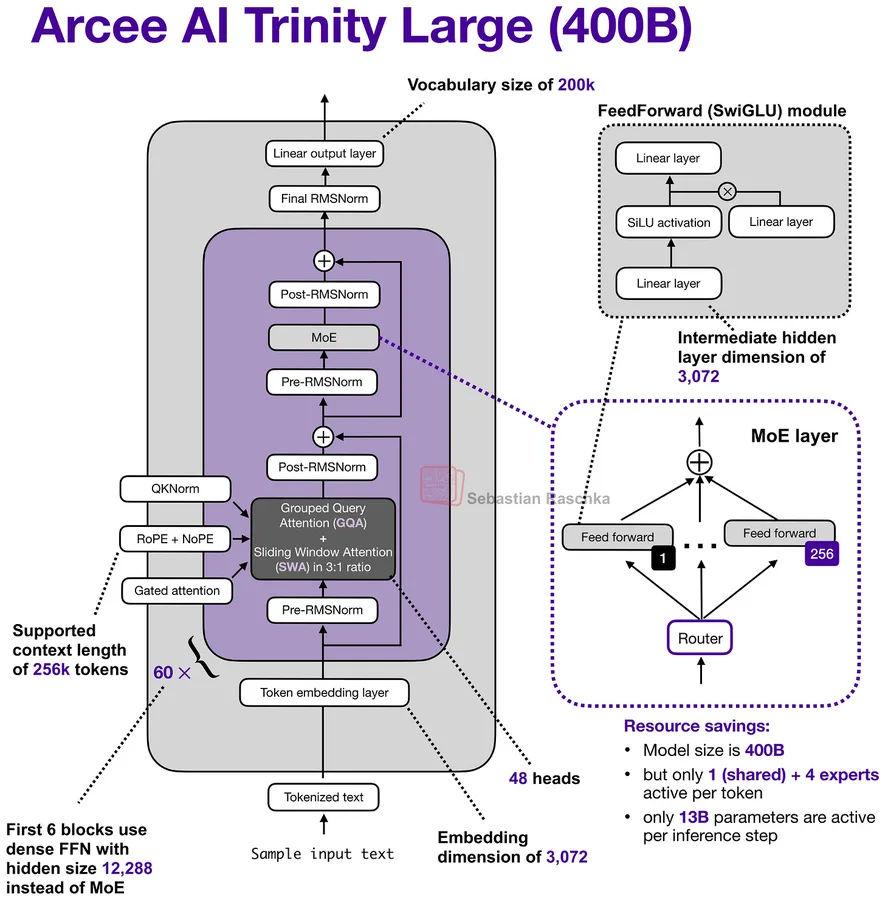

A Dream of Spring for Open-Weight LLMs: 10 Architectures from Jan-Feb 2026

A Roundup and Comparison of 10 Open-Weight LLM Releases in Spring 2026

If you have struggled a bit to keep up with open-weight model releases this month, this article should catch you up on the main themes. In this article, I will walk you through the ten main releases in chronological order, with a focus on the architect…

ai, machine-learning

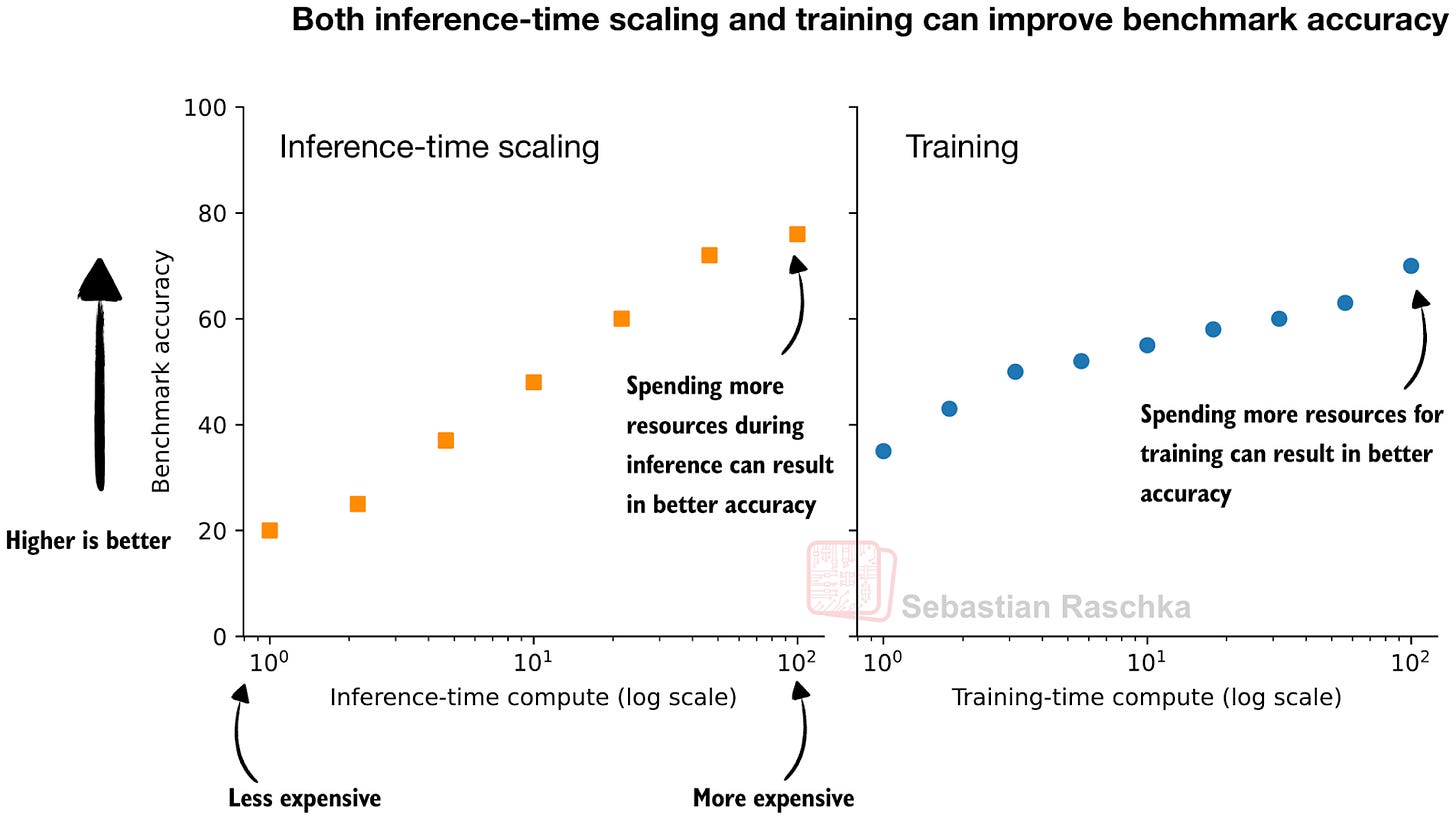

Categories of Inference-Time Scaling for Improved LLM Reasoning

And an Overview of Recent Inference-Scaling Papers (Including Recursive Language Models)

Inference scaling has become one of the most effective ways to improve answer quality and accuracy in deployed LLMs. The idea is straightforward. If we are willing to spend a bit more compute and more time at inference time (when we use the mode…

ai, machine-learning

The State of LLMs 2025: Progress, Problems, and Predictions

As 2025 comes to a close, I want to look back at some of the year's most important developments in large language models, reflect on the limitations and open problems that remain, and share a few thoughts on what might come next. As I tend to say every year, 2025 was a very eventful year for LLMs and AI, and this year, there was no sign …

ai, machine-learning

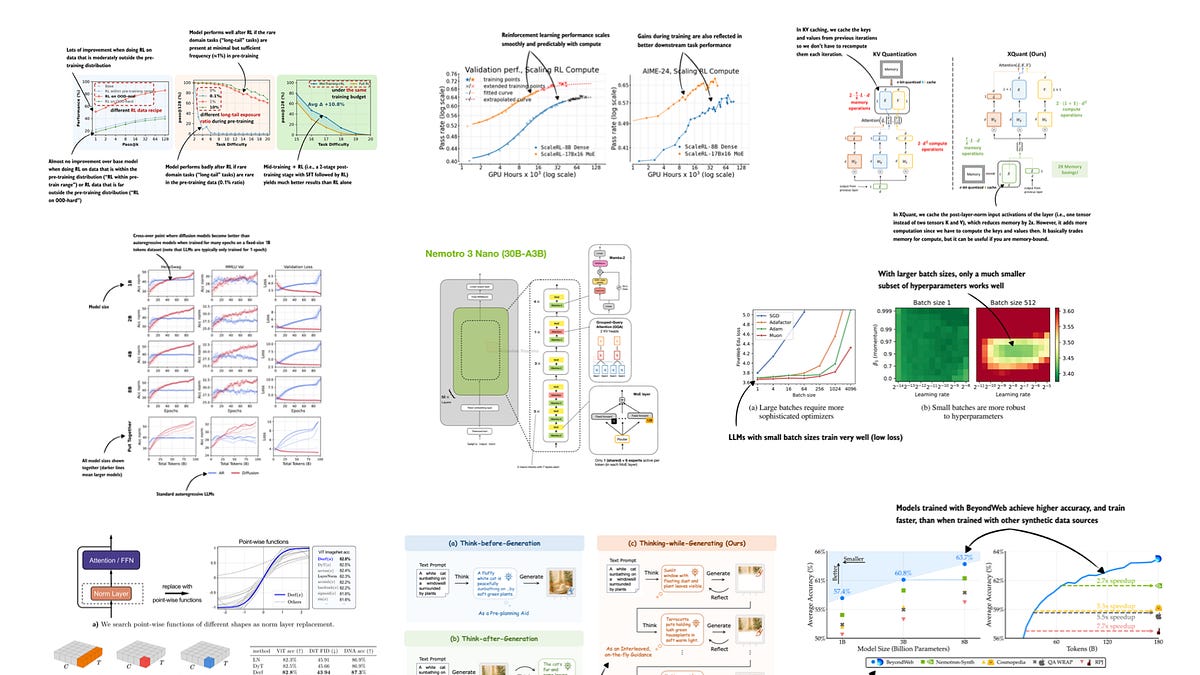

LLM Research Papers: The 2025 List (July to December)

In June, I shared a bonus article with my curated and bookmarked research paper lists to the paid subscribers who make this Substack possible. In a similar vein, as a thank-you to all the kind supporters, I have prepared a list below of the interesting research articles I bookmarked and categorized from July to December 2025. I skimmed over th…

Sebastian Raschka, PhD

12/3/2025

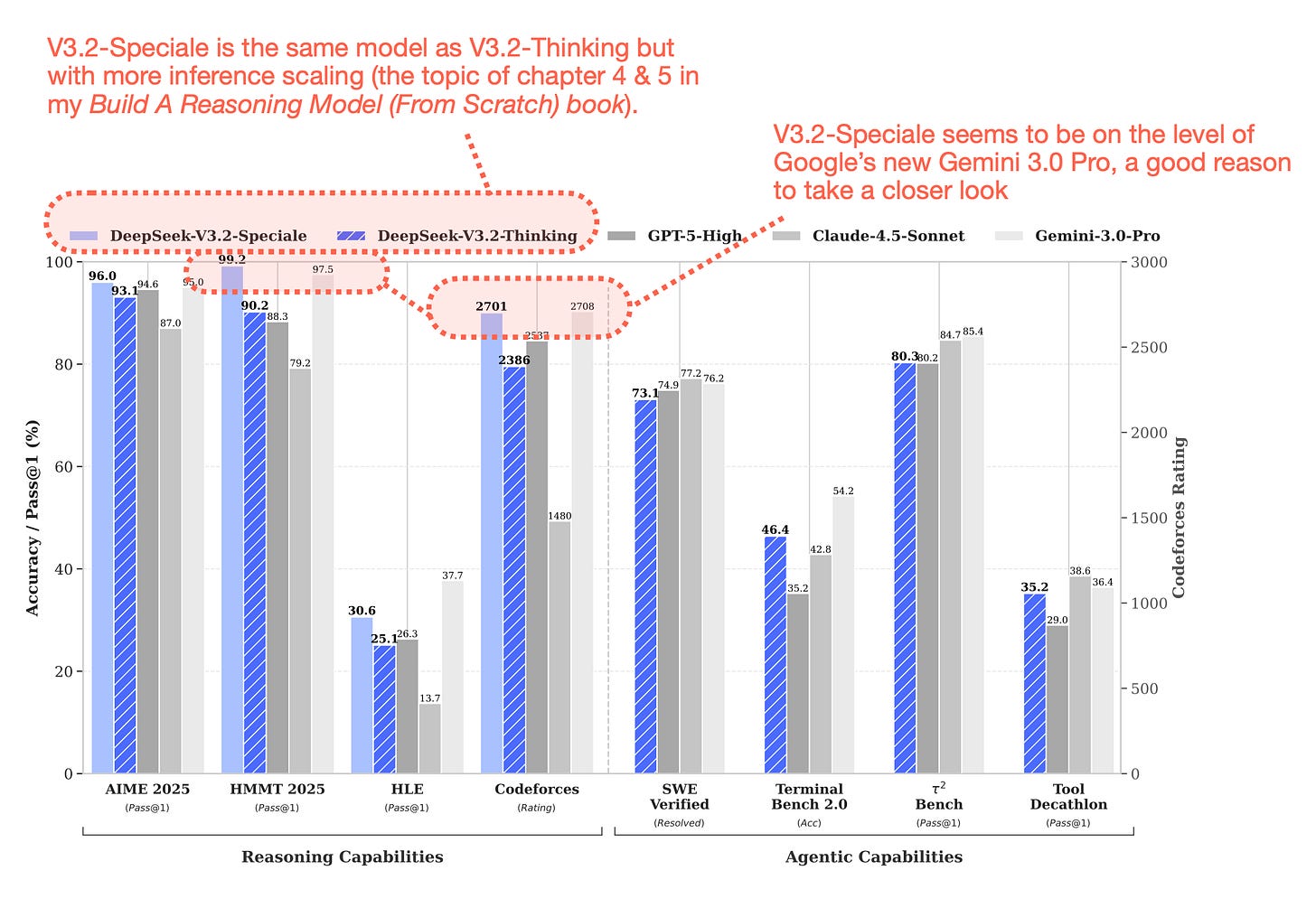

From DeepSeek V3 to V3.2: Architecture, Sparse Attention, and RL Updates

Understanding How DeepSeek's Flagship Open-Weight Models Evolved

Last updated: January 1st, 2026

Similar to DeepSeek V3, the team released their new flagship model over a major US holiday weekend. Given DeepSeek V3.2's really good performance (on the level of GPT-5 and Gemini 3.0 Pro), and the fact that it's also available as an op…

ai, machine-learning, reinforcement-learning

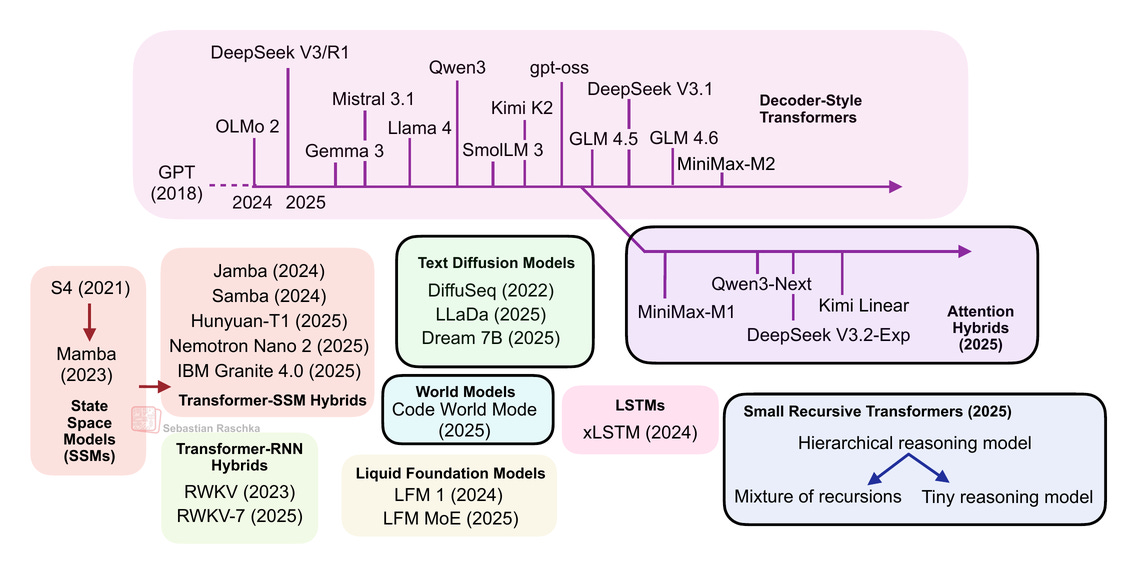

Beyond Standard LLMs

Linear Attention Hybrids, Text Diffusion, Code World Models, and Small Recursive Transformers

From DeepSeek R1 to MiniMax-M2, the largest and most capable open-weight LLMs today remain autoregressive decoder-style transformers, which are built on flavors of the original multi-head attention mechanism. However, we have also seen alternatives to standard LLMs popping up in rece…

ai, machine-learning

Understanding the 4 Main Approaches to LLM Evaluation (From Scratch)

Multiple-Choice Benchmarks, Verifiers, Leaderboards, and LLM Judges with Code Examples

How do we actually evaluate LLMs? It's a simple question, but one that tends to open up a much bigger discussion. When advising or collaborating on projects, one of the things I get asked most often is how to choose between different models an…

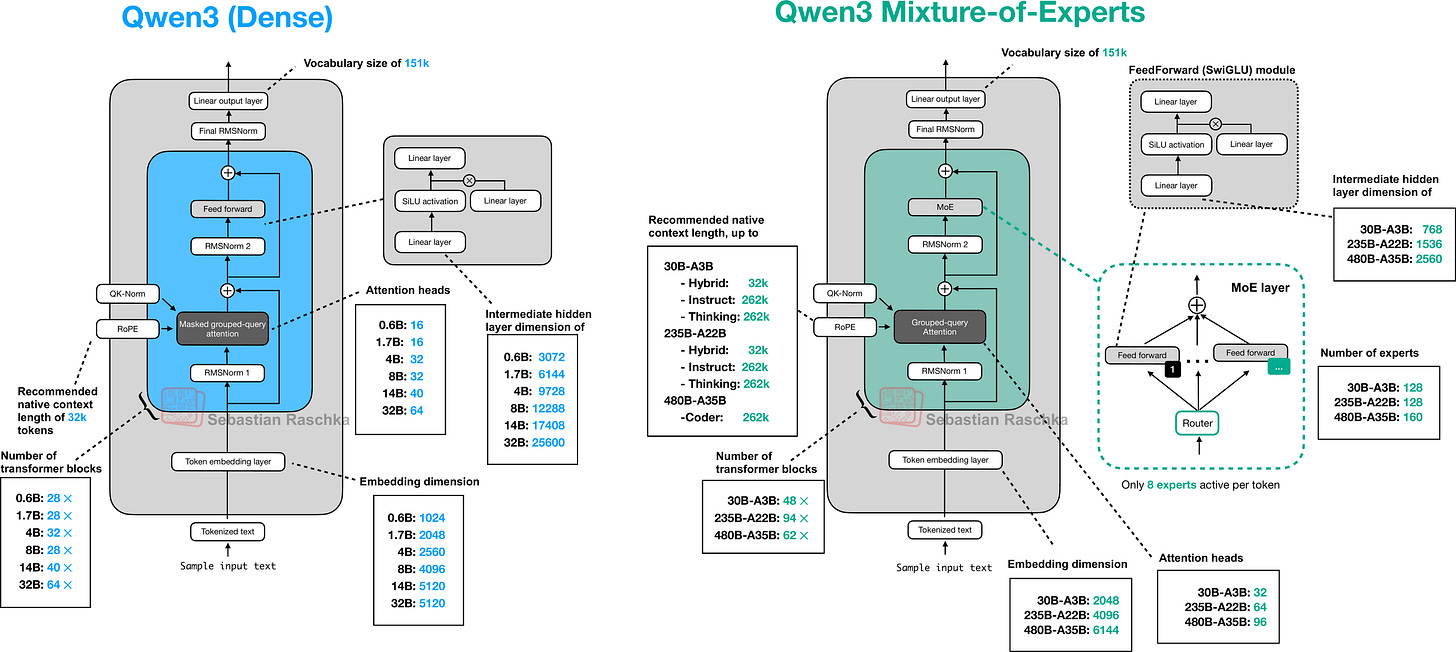

Understanding and Implementing Qwen3 From Scratch

A Detailed Look at One of the Leading Open-Source LLMs

Previously, I compared the most notable open-weight architectures of 2025 in The Big LLM Architecture Comparison. Then, I zoomed in and discussed the various architecture components in From GPT-2 to gpt-oss: Analyzing the Architectural Advances on a conceptual level. Since all good things come…

ai, machine-learning, nlp

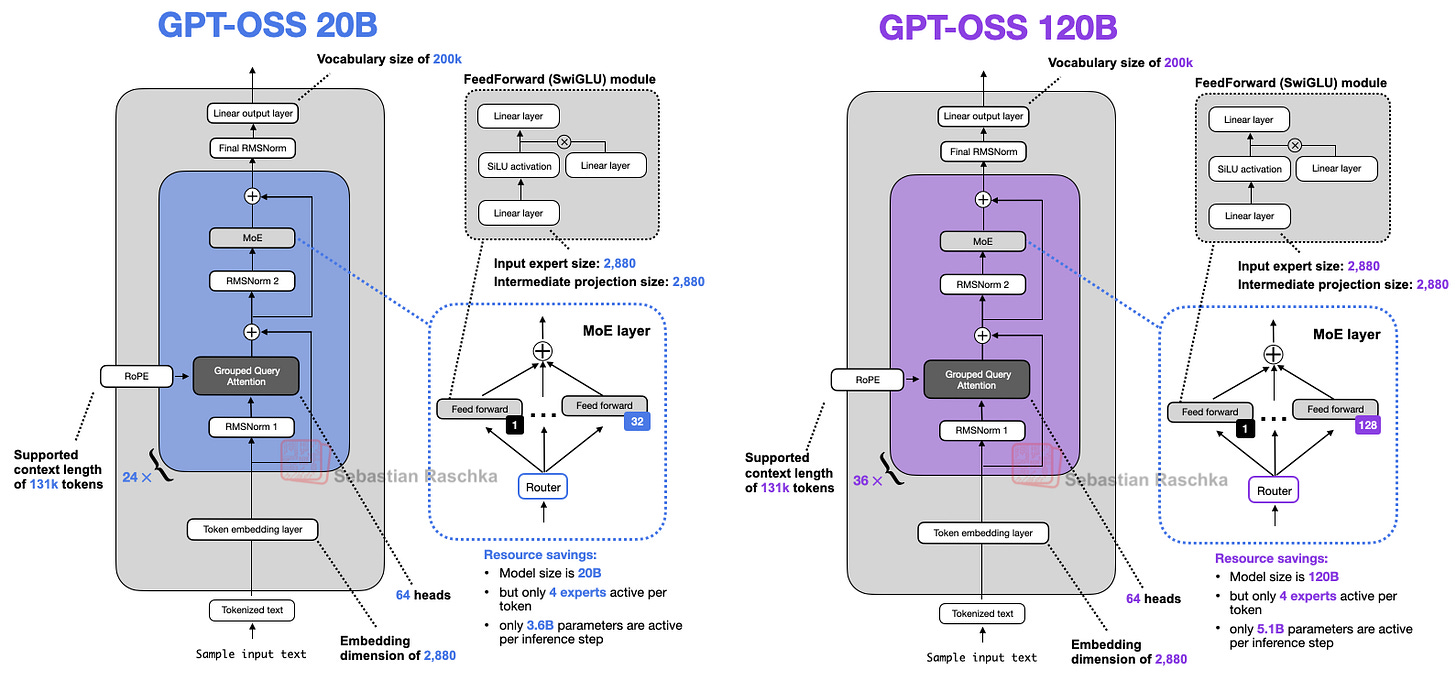

From GPT-2 to gpt-oss: Analyzing the Architectural Advances

And How They Stack Up Against Qwen3

OpenAI just released their new open-weight LLMs this week: gpt-oss-120b and gpt-oss-20b, their first open-weight models since GPT-2 in 2019. And yes, thanks to some clever optimizations, they can run locally (but more about this later). This is the first time since GPT-2 that OpenAI has shared a large,…

ai, machine-learning

The Big LLM Architecture Comparison

From DeepSeek V3 to GLM-5: A Look at Modern LLM Architecture Design

Last updated: Apr 2, 2026 (added Gemma 4 in section 23)

It has been seven years since the original GPT architecture was developed. At first glance, looking back at GPT-2 (2019) and forward to DeepSeek V3 and Llama 4 (2024-2025), one might be surprised at how structurally similar these models st…

ai, machine-learning

LLM Research Papers: The 2025 List (January to June)

A topic-organized collection of 200+ LLM research papers from 2025

As some of you know, I keep a running list of research papers I (want to) read and reference. About six months ago, I shared my 2024 list, which many readers found useful. So, I was thinking about doing this again. However, this time, I am incorporating that one piece of feedbac…

ai, machine-learning

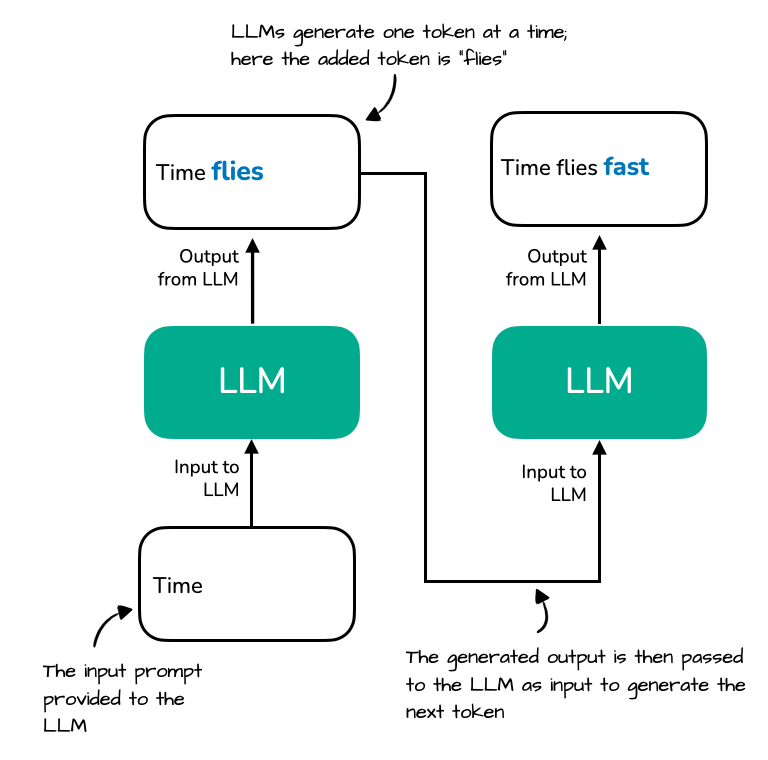

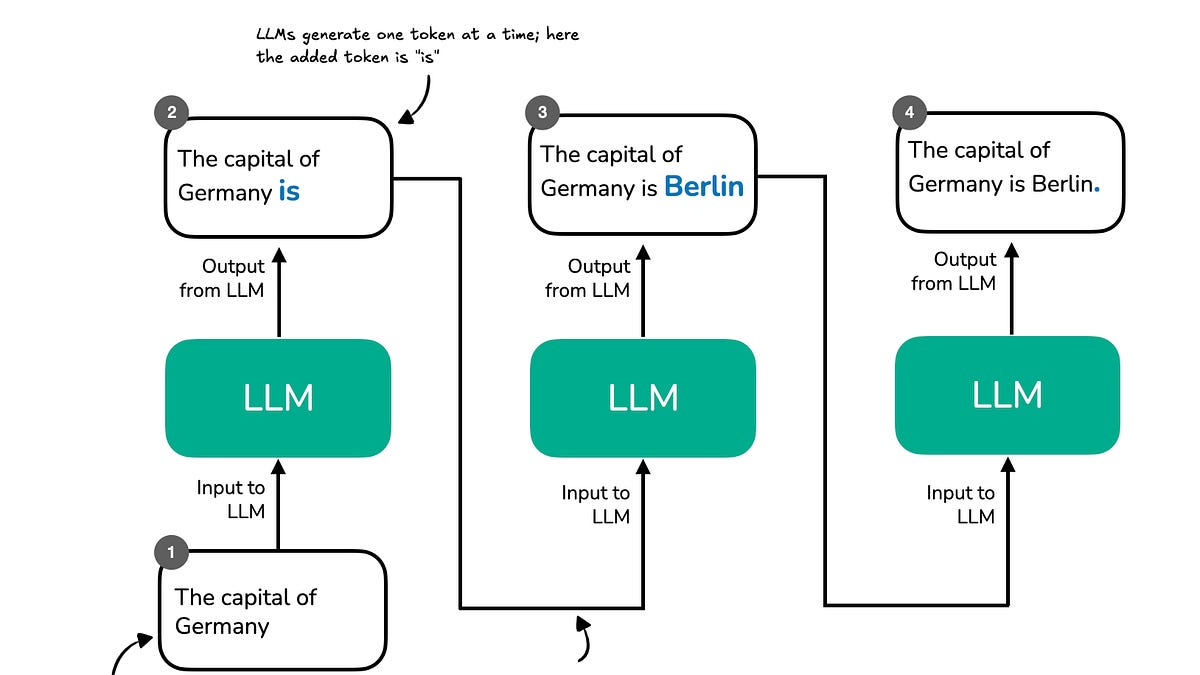

Understanding and Coding the KV Cache in LLMs from Scratch

KV caches are one of the most critical techniques for efficient inference in LLMs in production.

KV caches are an important component for compute-efficient LLM inference in production. This article explains how they work conceptually and in code with a from-scratch, human-readable implementation. It's been a while since I shared a technic…

ai, machine-learning

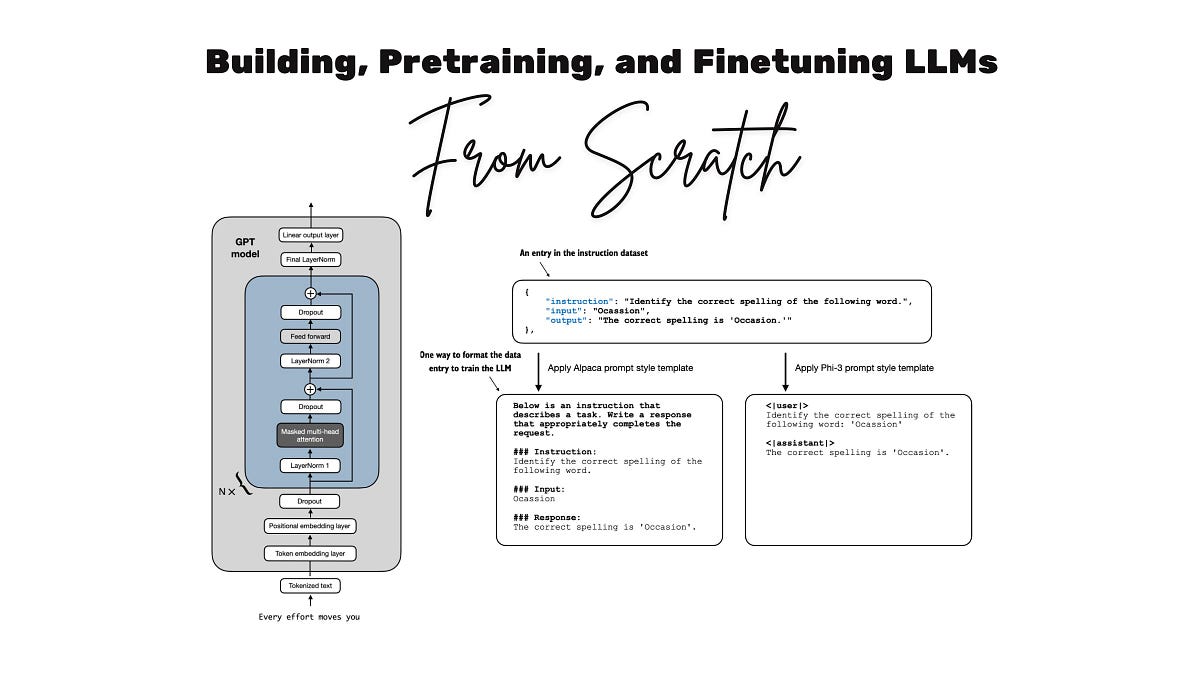

Coding LLMs from the Ground Up: A Complete Course

I wrote a lot about reasoning models in recent months (4 articles in a row)! Next to everything "agentic," reasoning is one of the biggest LLM topics of 2025. This month, however, I wanted to share more fundamental or "foundational" content with you on how to code LLMs, which is one of the best ways to understand how LLMs work. Why? Many people re…

ai, computer-science, machine-learning, programming-languages

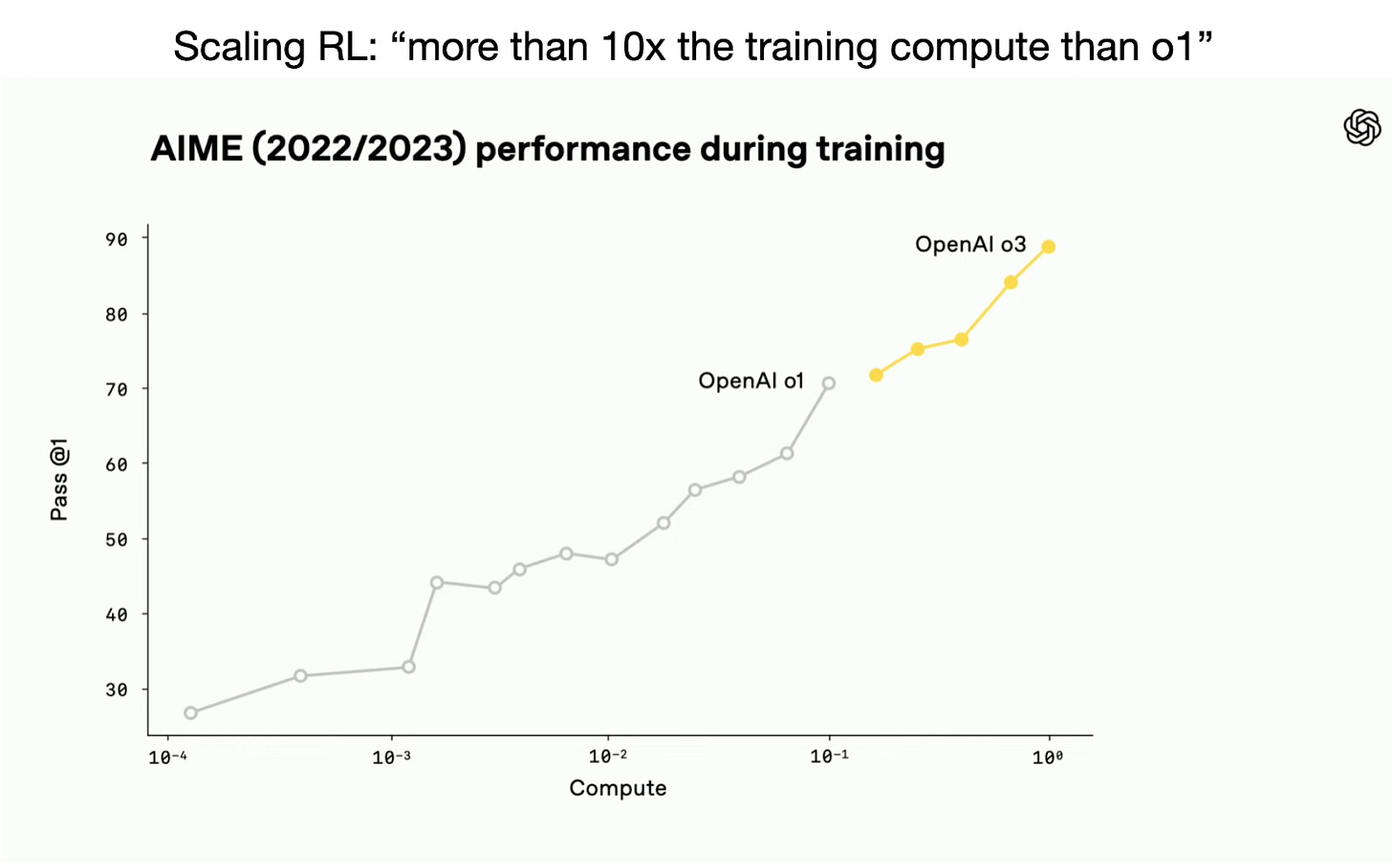

The State of Reinforcement Learning for LLM Reasoning

Understanding GRPO and New Insights from Reasoning Model Papers

A lot has happened this month, especially with the releases of new flagship models like GPT-4.5 and Llama 4. But you might have noticed that reactions to these releases were relatively muted. Why? One reason could be that GPT-4.5 and Llama 4 remain conventional models, which means…

ai, reinforcement-learning

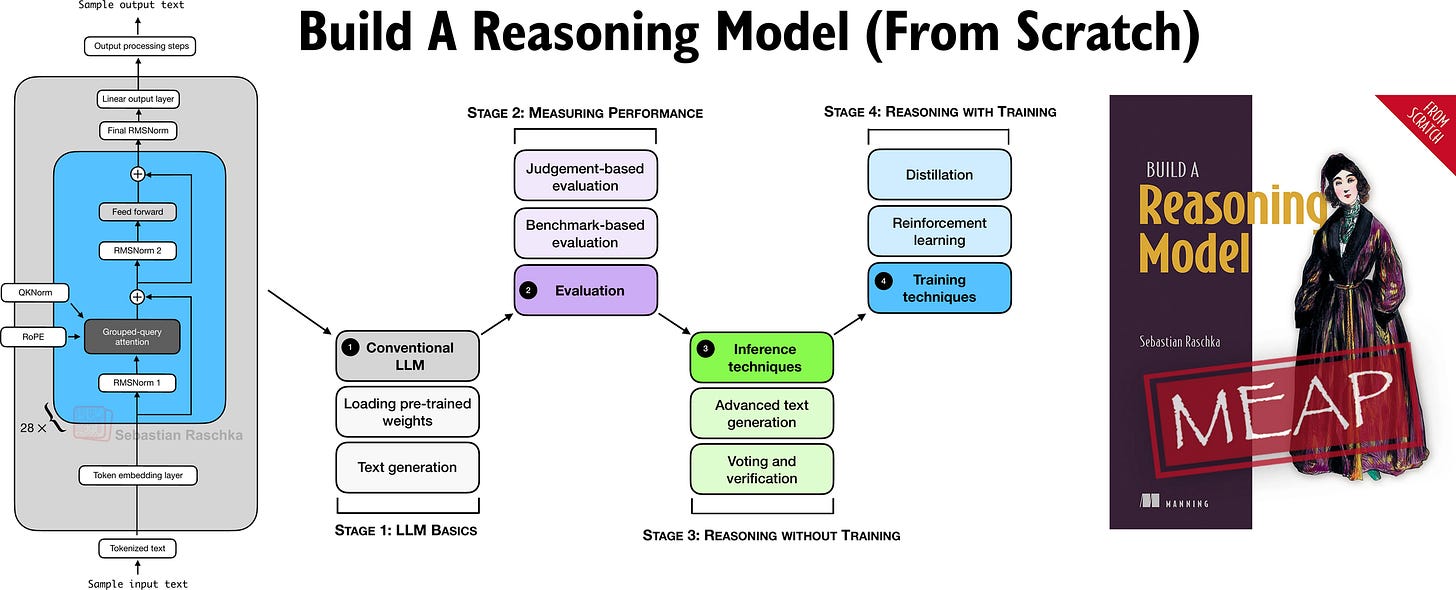

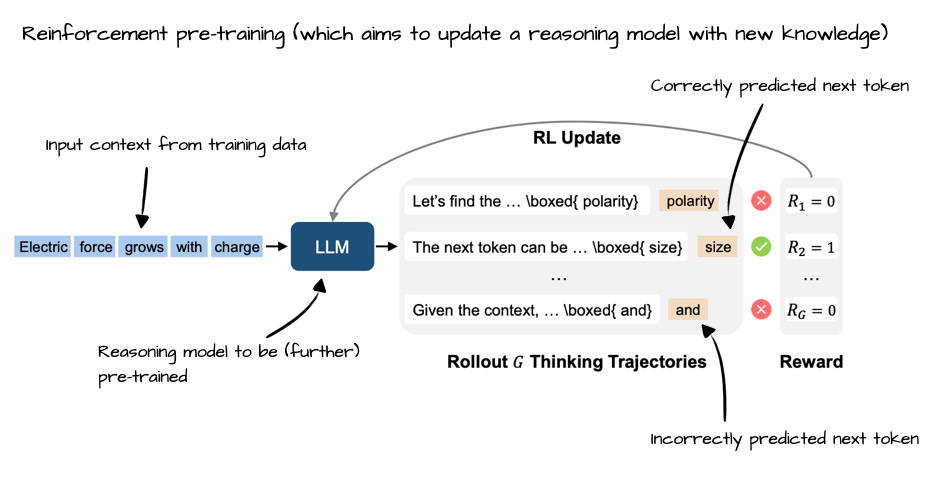

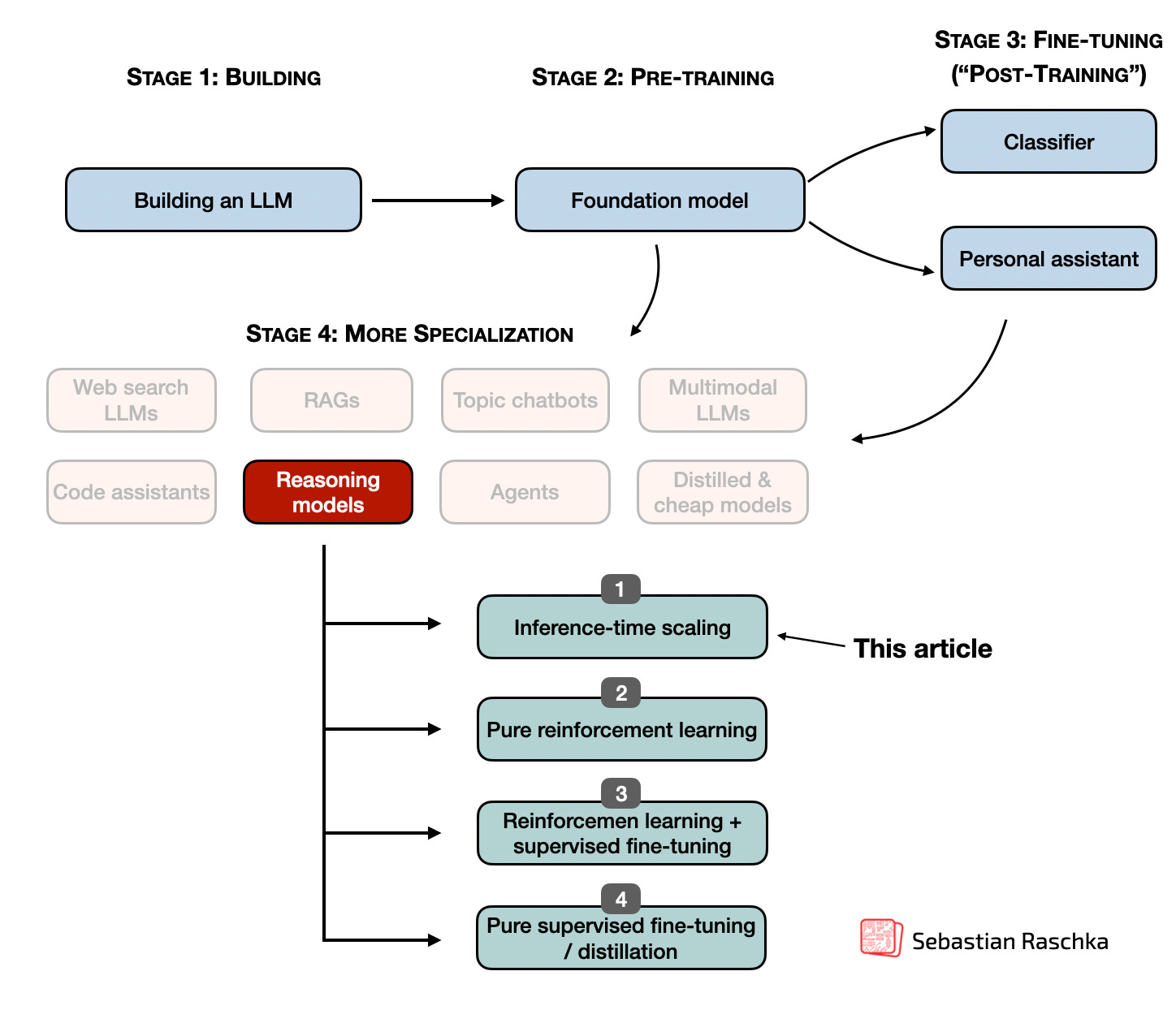

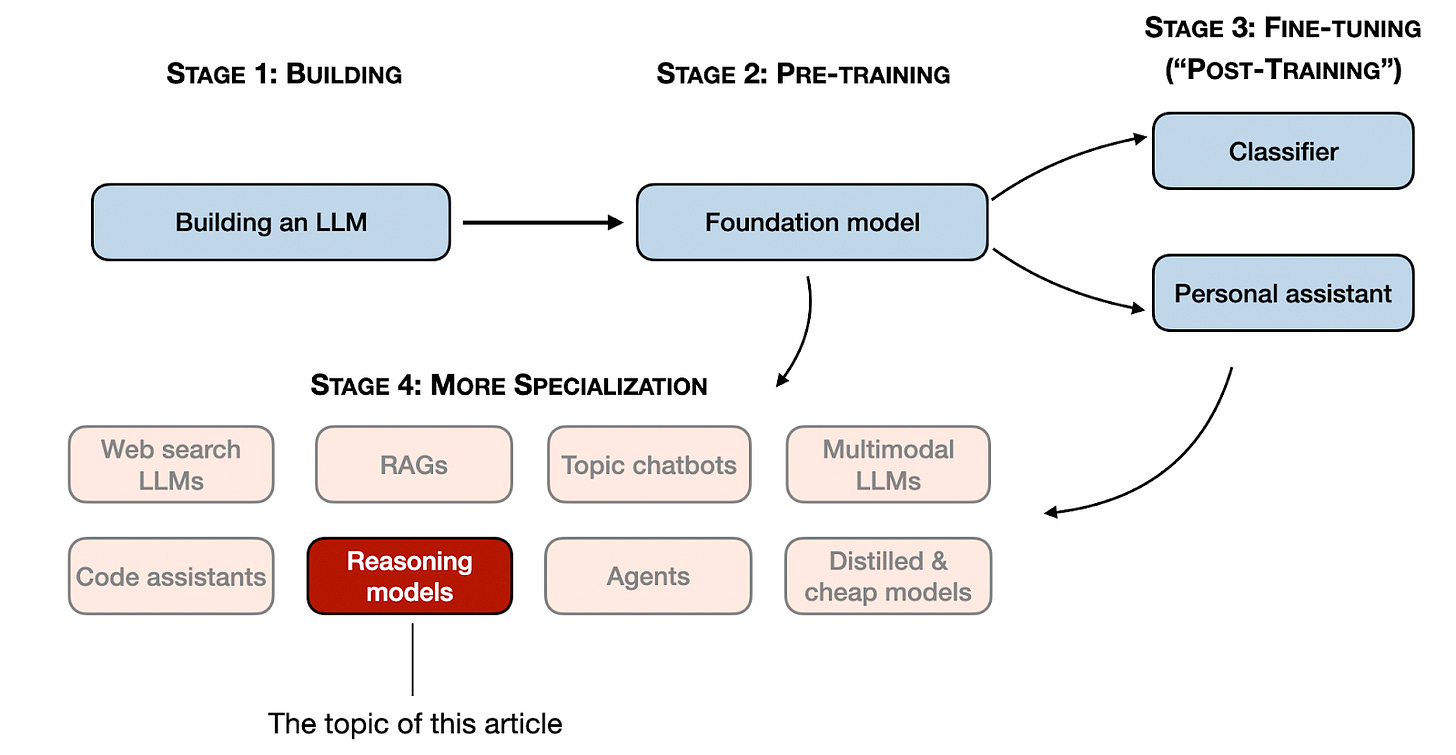

First Look at Reasoning From Scratch: Chapter 1

An introduction to reasoning in today's LLMs

Hi everyone,

As you know, I've been writing a lot lately about the latest research on reasoning in LLMs. Before my next research-focused blog post, I wanted to offer something special to my paid subscribers as a thank-you for your ongoing support. So, I've started writing a new book on how reasoning works…

ai, nlp

The State of LLM Reasoning Model Inference

Inference-Time Compute Scaling Methods to Improve Reasoning Models

Improving the reasoning abilities of large language models (LLMs) has become one of the hottest topics in 2025, and for good reason. Stronger reasoning skills allow LLMs to tackle more complex problems, making them more capable across a wide range of tasks users care about. In the last fe…

ai, machine-learning

Understanding Reasoning LLMs

Methods and Strategies for Building and Refining Reasoning Models

This article describes the four main approaches to building reasoning models, or how we can enhance LLMs with reasoning capabilities. I hope this provides valuable insights and helps you navigate the rapidly evolving literature and hype surrounding this topic. In 2024, the LLM field saw increasing speci…

ai, machine-learning

Noteworthy AI Research Papers of 2024 (Part Two)

Six influential AI papers from July to December

I hope your 2025 is off to a great start! To kick off the year, I've finally been able to complete the draft and second part of this AI Research Highlights of 2024 article. It covers a variety of relevant topics, from mixture-of-experts models to new LLM scaling laws for precision. Note that this arti…

ai, machine-learning, nlp
