ByteByteGo Newsletter

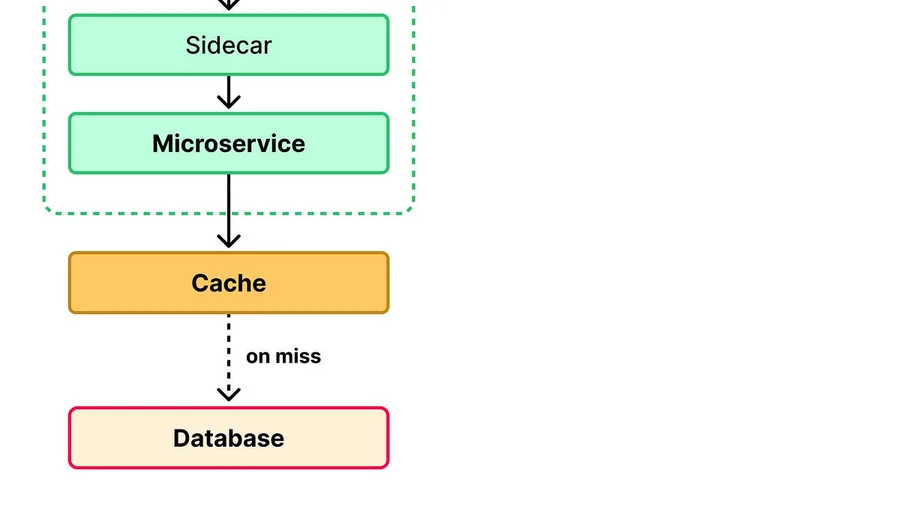

The Path of a Request: A Tour of Modern Web Architecture

A web page loads in under a second. In that second, a single user request may have passed through roughly ten distinct systems on its way to and from the database. The page feels fast because of how those systems are arranged. Each layer absorbs as much traffic as it can before passing the rest along. Taken together, the layers form a funne…

The hardest part of data analysis isn’t writing SQL. It’s finding the right tables to use in the first place and understanding semantically how to use data.

This piece is a working guide for engineers who want to land on the productive side of that split.

In this article, we will learn how they built this flywheel and the key takeaways.

In this article, we will look at the most significant failure mode patterns in distributed systems and the standard approaches to deal with each of them.

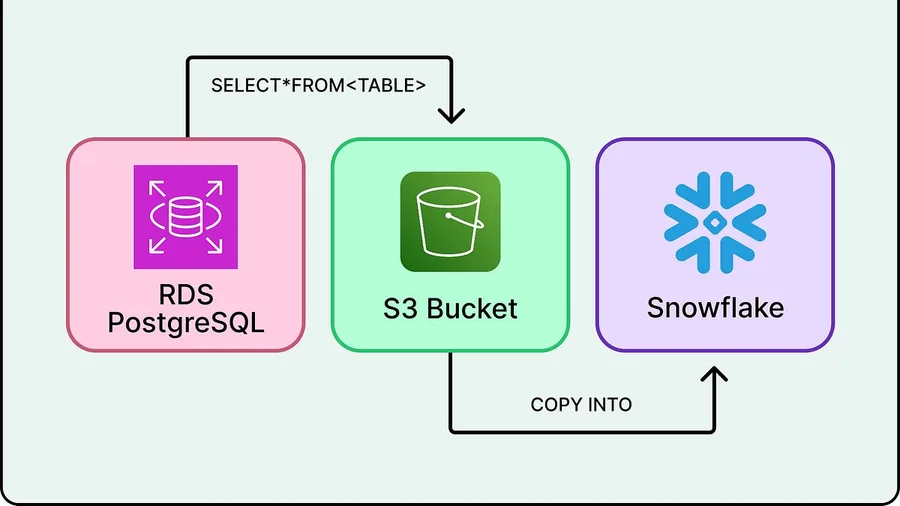

In this article, we will look at how Airtable’s data infrastructure team built its architecture, the challenges they faced, the tradeoffs they accepted, and why the choices they made only make sense once their data is properly understood.

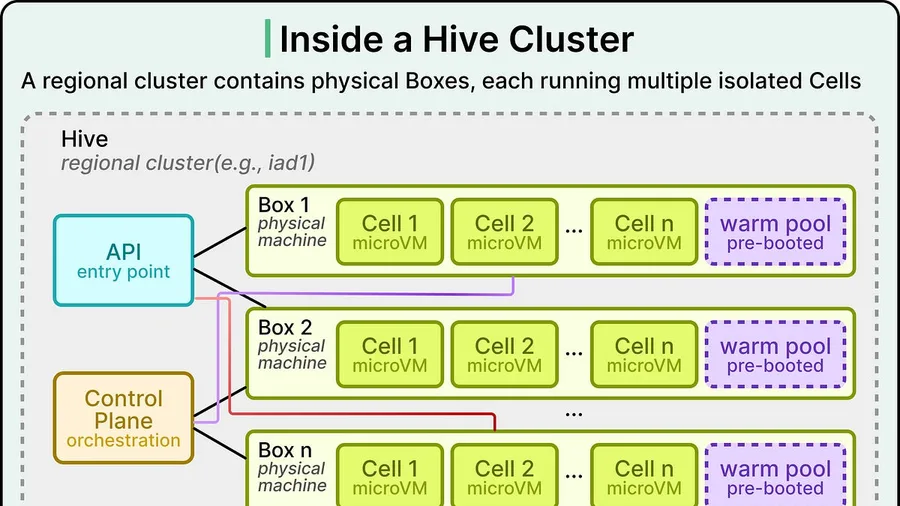

In this article, we examine the constraints Vercel faced, the choices they made in response, and the optimizations that produced the speedup.

In this article, we will look at how the CockroachDB engineering team built this index and the challenges they faced.

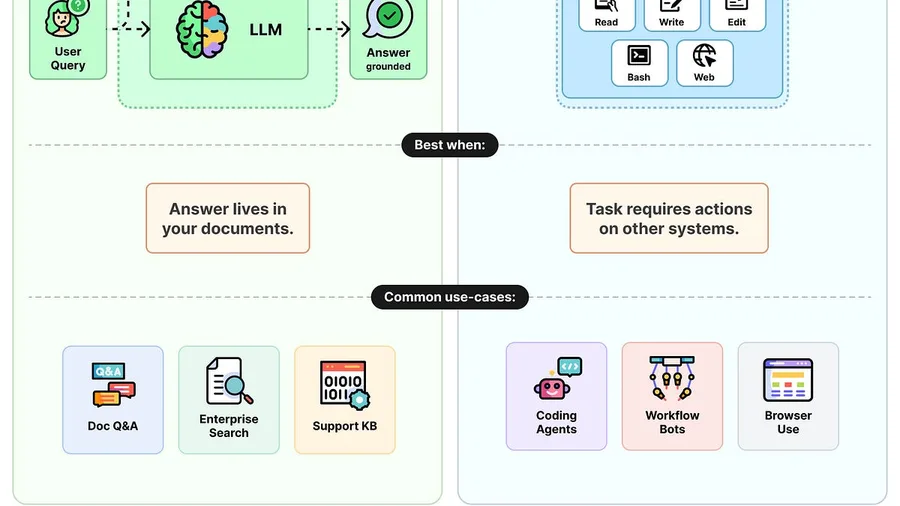

Ask an LLM about your company's data and it will guess. The two patterns that fix this are RAG and agents, and they solve different problems.

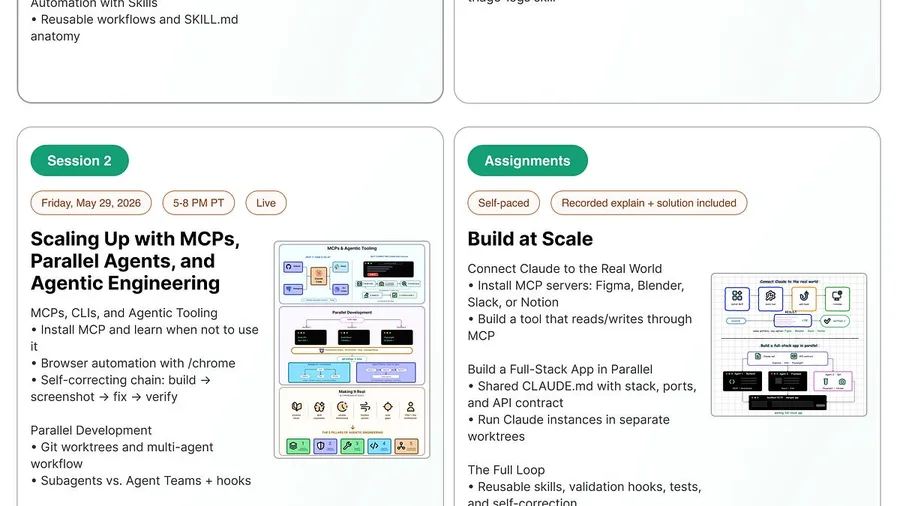

Build with Claude Code: New Cohort Launch

We’re launching a new 2-day intensive, cohort-based course called Build with Claude Code, taught by John Kim, who has trained hundreds of engineers at Meta to use Claude Code in real production workflows. The course starts on May 28. A few things you’ll learn: The agentic loop, context engineering, and memory layers that make Claude Code useful for r…

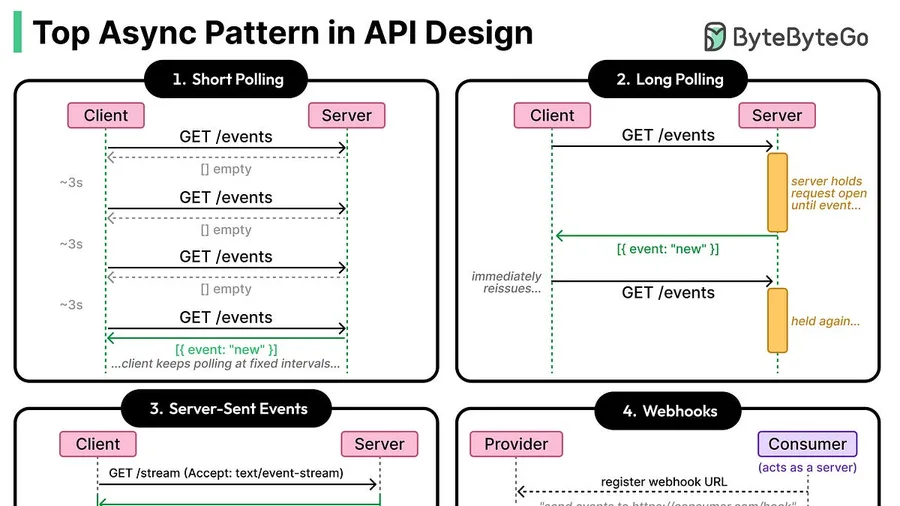

In this article, we will look at each of these patterns in detail, along with their advantages.

In this article, we will understand how Netflix built this system and the challenges it faced.

For Snap, machine learning is closer to the product itself than a feature on top of it.

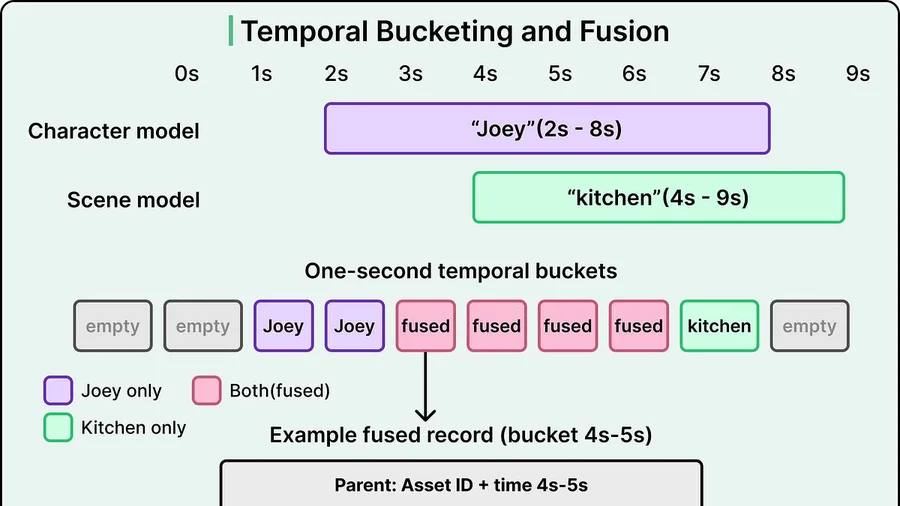

Grab’s data engineering team had a problem that looks familiar to anyone who’s maintained shared infrastructure.

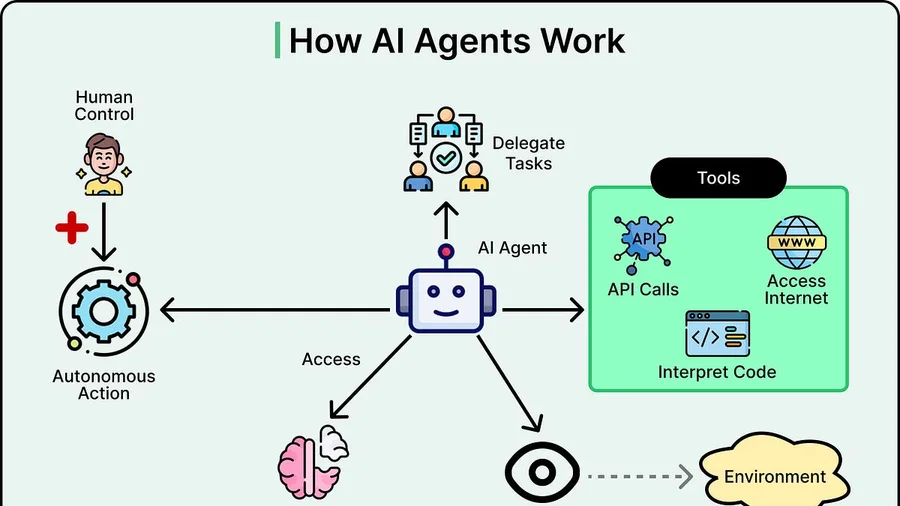

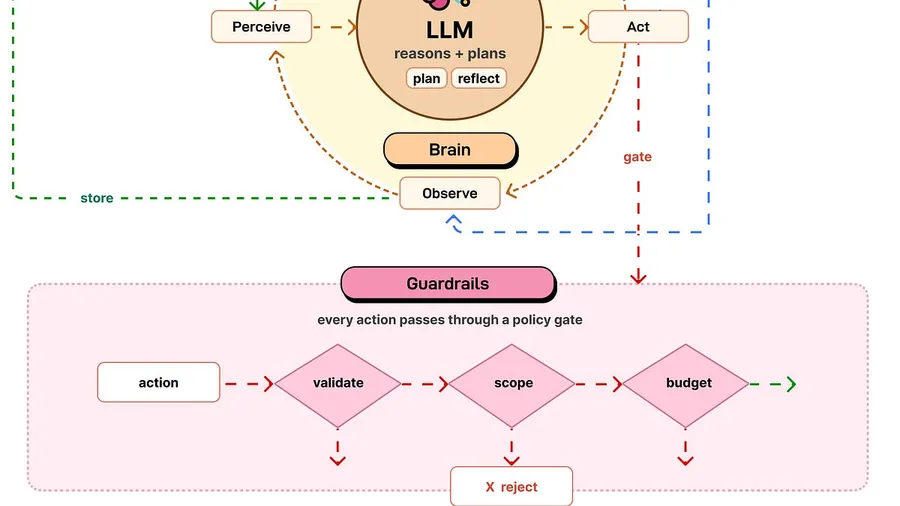

EP215: The Anatomy of an AI Agent

On June 10, GitLab Transcend streams live from London with an agenda built for practitioners like you. You can expect an agenda that’s full of keyboard moments with live demos of Duo Agent Platform, agentic AI use cases from your peers, and The Developer Show hosted live by Senior Developer Advocate Colleen Lake. GitLab Transcend streams live from London on June…

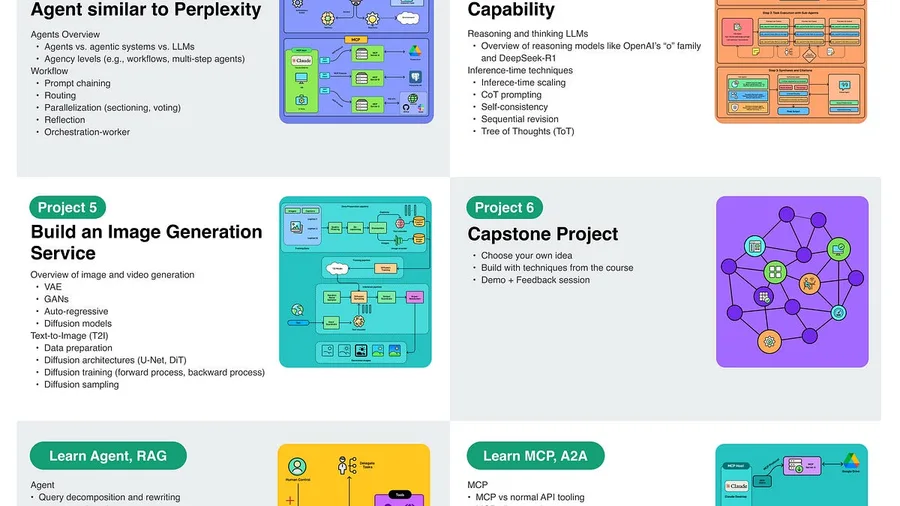

Our 6th cohort of Becoming an AI Engineer starts tomorrow, Saturday, May 16. This is a live, cohort-based course created in collaboration with best-selling author Ali Aminian and published by ByteByteGo.

Distributed systems are built out of services that need to communicate, and the simplest way to do that is for one service to call another directly and wait for a response.

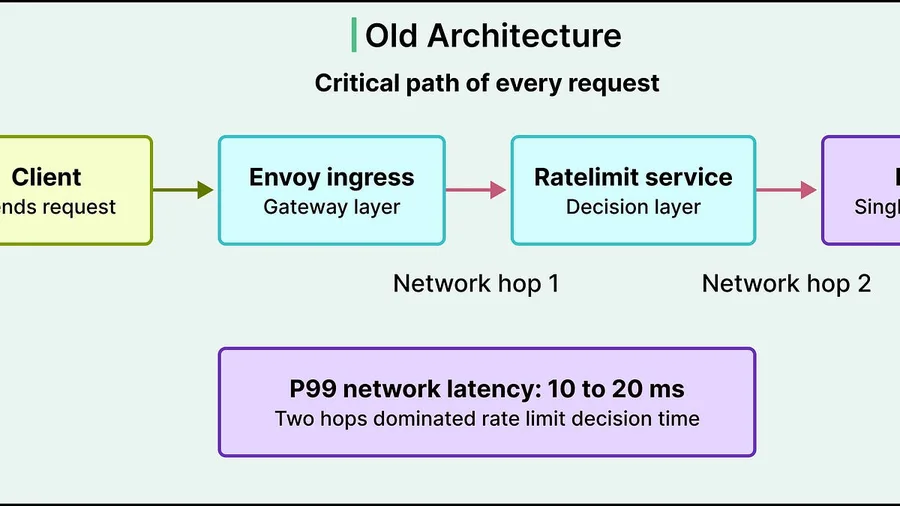

In this article, we look at how Databricks implemented rate limiting at scale, how they shrank the critical path, and the accuracy tradeoff that shrinking usually requires.

In this article, we will learn what happened as Figma grew and how its engineering team handled the data pipeline issues that came with that growth.
