Microsoft Research

Vega turns a full credential into a single proof, sharing only what is needed and nothing more, with performance that works in real apps. The post Vega: Zero-knowledge proofs for digital identity in the age of AI appeared first on Microsoft Research.

Our recent paper, “LLMs Corrupt Your Documents When You Delegate”, has generated discussion about the reliability of AI systems in delegated workflows. We appreciate the interest in this work and want to clarify several important points about what the paper does—and does not—claim. The research aims to develop robust evaluation methods for long-horizon delegated and […] The post Further Notes on …

mimalloc is an open-source, modern, scalable memory allocator that is a drop-in replacement for malloc and free. It is relatively small (~12K lines), with clear internal data structures, and is easy to build and integrate into other projects. It provides bounded worst-case allocation times (up to OS primitives), bounded space overhead, low internal fragmentation, and minimal contention by relying…

Introducing GridSFM, a small foundation model that can predict AC optimal power flow in milliseconds, boosting efficiency and unlocking cost savings. Learn how GridSFM gives grid operators direct visibility into congestion, stability, and system health. The post GridSFM: A new, small foundation model for the electric grid appeared first on Microsoft Research.

MatterSim is expanding what AI can do for materials science, from faster large-scale simulations to MatterSim-MT, a new multi-task model for simulating properties beyond potential energy surfaces alone. The post Advancing AI for materials with MatterSim: experimental synthesis, faster simulation, and multi-task models appeared first on Microsoft Research.

Using SocialReasoning-Bench, we observed a stable pattern across models: agents execute competently but fail to consistently improve the user’s position, even with explicit instructions to optimize for user interest. The post SocialReasoning-Bench: Measuring whether AI agents act in users’ best interests appeared first on Microsoft Research.

Microsoft Research is excited to release an open dataset of approximate transmission topology of the U.S. power grid derived from publicly available data. The ability to study transmission-level power grid behavior is essential for modern power systems research. Analyses of congestion, transmission expansion, demand growth, and system resilience all depend on network models with realistic […] The…

Microsoft researchers share advances in building and operating large-scale distributed systems, spanning datacenters, networking, and the growing intersection with AI during NSDI ’26. The post Microsoft at NSDI 2026: Advances in large-scale networked systems appeared first on Microsoft Research.

Safe agents don’t guarantee a safe ecosystem of interconnected agents. Microsoft Research examines what breaks when AI agents interact and why network-level risks require new approaches. The post Red-teaming a network of agents: Understanding what breaks when AI agents interact at scale appeared first on Microsoft Research.

Deploying large language models (LLMs) in real-world, high-stakes settings is harder than it should be. In high-stakes settings like law, medicine, and cloud incident response, performance and reliability can quickly break down because adapting models to domain-specific requirements is a slow and manual process that is difficult to reproduce. The core challenge is domain adaptation, […] The post …

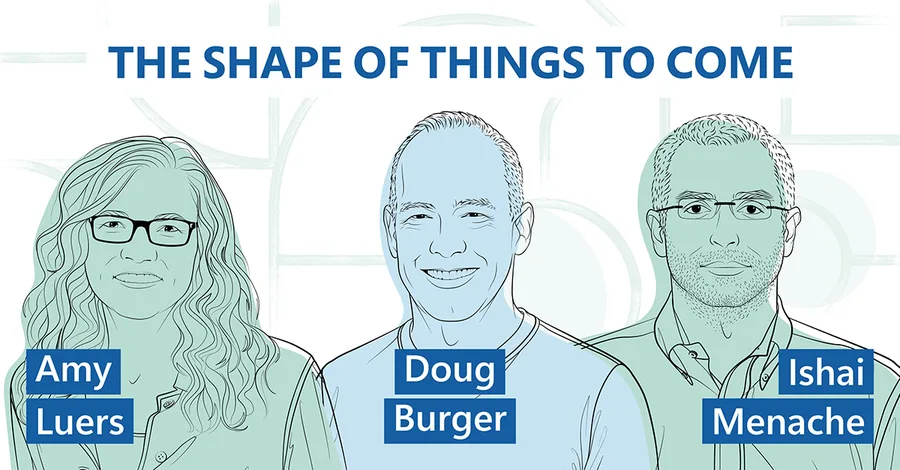

Doug Burger, sustainability expert Amy Luers, and optimization researcher Ishai Menache examine the global emissions implications of datacenter operations, efficiency gains, and AI's potential across electrification, materials, and food systems. The post Can we AI our way to a more sustainable world? appeared first on Microsoft Research.

For the past five years, the New Future of Work report has captured how work is changing. This year, the shift feels especially sharp. Previous editions have focused on technology’s role in increasing productivity by automating tasks, accelerating communication, and expanding access to information, as well as the rise of remote work. Today, generative AI […] The post New Future of Work: AI is dri…

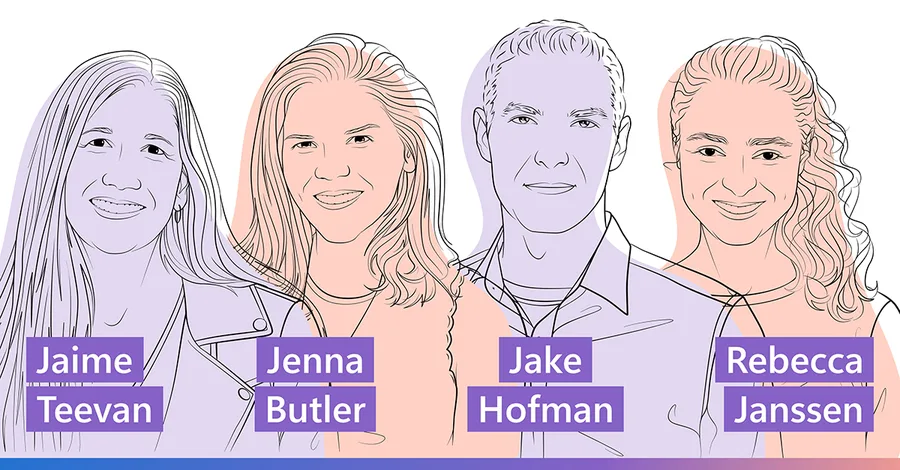

Microsoft Chief Scientist Jaime Teevan and researchers Jenna Butler, Jake Hofman, and Rebecca Janssen unpack the New Future of Work Report 2025 and explore the ideal AI-driven working world. Plus, is AI a tool or a collaborator? And why the answer matters. The post Ideas: Steering AI toward the work future we want appeared first on Microsoft Research.

At a glance
- AI benchmarks report performance on specific tasks but provide limited insight into underlying capabilities; ADeLe evaluates models by scoring both tasks and models across 18 core abilities, enabling direct comparison between task demands and model capabilities.
- Using these ability scores, the method predicts performance on new tasks with ~88% accuracy, including for models such a…

At a glance
- To successfully complete tasks, embodied AI agents must ground and update their plans based on visual feedback.
- AsgardBench isolates whether agents can use visual observations to revise their plans as tasks unfold.
- Spanning 108 controlled task instances across 12 task types, the benchmark requires agents to adapt their plans based on what they observe.
- Because objects can be i…

At a glance
- VLM-based robot planners struggle with long, complex tasks because natural-language plans can be ambiguous, especially when specifying both actions and locations.
- GroundedPlanBench evaluates whether models can plan actions and determine where they should occur across diverse, real-world robot scenarios.
- Video-to-Spatially Grounded Planning (V2GP) is a framework that converts rob…

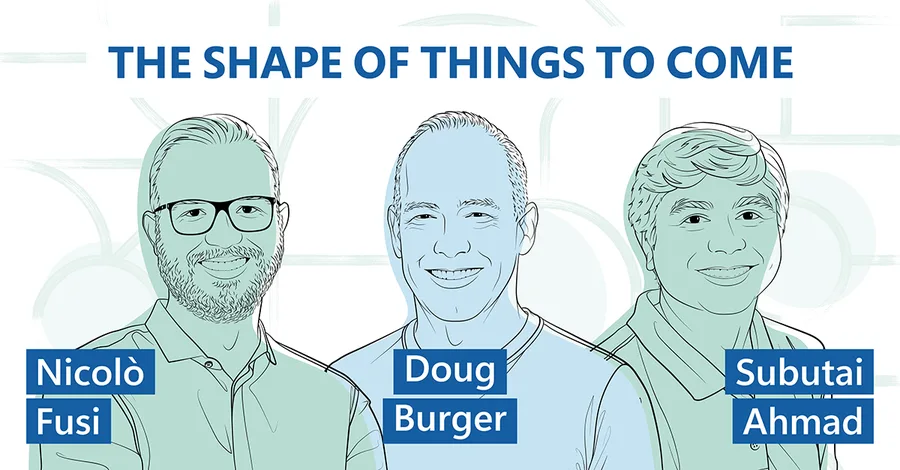

Technical advances are moving at such a rapid pace that it can be challenging to define the tomorrow we’re working toward. In The Shape of Things to Come, Microsoft Research leader Doug Burger and experts from across disciplines tease out the thorniest AI issues facing technologists, policymakers, business decision-makers, and other stakeholders today. The goal: to amplify the shared understandin…

At a glance
- Problem: Debugging AI agent failures is hard because trajectories are long, stochastic, and often multi-agent, so the true root cause gets buried.
- Solution: AgentRx pinpoints the first unrecoverable (“critical failure”) step by synthesizing guarded, executable constraints from tool schemas and domain policies, then logging evidence-backed violations step-by-step…

At a glance
- Today’s AI agents store long interaction histories but struggle to reuse them effectively.
- Raw memory retrieval can overwhelm agents with lengthy, low-value context.
- PlugMem transforms interaction history into structured, reusable knowledge.
- A single, general-purpose memory module improves performance across diverse agent benchmarks while using fewer memory tokens.

It seems co…
