DigitalOcean Blog

Most teams building on LLMs today make a single model decision and apply it uniformly across every request. They reach for a frontier model not because every task demands it, but because building the infrastructure to do anything smarter is hard, time-consuming, and easy to get wrong. When the tooling isn’t there, the path of least resistance is to use a single model, even if it means that you en…

Everyone calling an LLM API has access to the same models. So what actually sets technical teams apart? It’s everything around the model: the routing logic, the live data pipelines, and the ability to scale from prototype to production without ever rewriting your code. Which LLM tops a benchmark matters less than what becomes possible when infrastructure stops being an afterthought, when one …

I’ve spent the last fifteen years building cloud services: early days of AWS building S3 and EBS, helping launch Oracle Cloud Infrastructure from inception, and now building the agentic cloud at DigitalOcean for AI-natives. Every cloud I’ve worked on was designed for the workloads of its era. Those clouds were built for human-centric SaaS applications: a few users, a handful of requests per sessi…

Welcome to What’s New on the DigitalOcean Inference Engine, your weekly roundup of the latest inference updates at DigitalOcean. Week of April 27: OpenAI’s GPT-5.5 is now available across DigitalOcean’s inference cloud products, bringing a new level of autonomous, agent-like intelligence to production AI workflows. Designed to go beyond single prompt responses, GPT-5.5 can plan, reason, and execute…

The AI industry has a compounding bottleneck, and it isn’t the models. It’s inference. What used to be a single model call has become a system of continuous interaction. Applications now orchestrate multiple models, retrieve and synthesize data, execute tools, and repeat this cycle in production. These are no longer stateless requests. They are dynamic systems that behave more like infrastructure…

Today at Deploy, we are announcing the general availability of DeepSeek V3.2, MiniMax-M2.5, and Qwen 3.5 397B on DigitalOcean Serverless Inference. On DeepSeek V3.2 and Qwen 3.5 397B, we deliver #1 output speed across all providers Artificial Analysis tested. On DeepSeek V3.2 specifically, that translates to 230 output tokens per second and sub-1-second Time-to-First-Token (TTFT) for 10,000 inpu…

Getting a model to answer 10 inference requests concurrently is tricky but simple enough; getting it to handle 2,000 engineers hitting a coding assistant with long contexts, all day, without runaway costs, is where teams stall. A working endpoint is only the beginning. Teams need to identify the supporting hardware and wire up the right components—serving, scaling, observability, and cost guardra…

In large-scale cloud environments, unpredictable hypervisor crashes carry real operational cost. While traditional reactive monitoring that relies on static thresholds and post-hoc alerts was once the industry standard, it misses the non-linear, stochastic signals that precede hardware failure. In an era where high availability is the norm, the transition from reactive observation …

Our journey to truly understand our customer experience began with a hard look at our internal availability numbers at the start of 2025. We saw something uncomfortable: the numbers didn’t match our customers’ reality. Our monthly availability oscillated between 99.5% and 99.9%. Those peaks and valleys depended more on whether we declared a high-severity incident that month than on how the platf…

We know how to scale traditional web services: throw a load balancer in front of stateless microservices and horizontally scale your CPU instances as traffic grows. Large Language Models break this playbook because LLM inference is fundamentally stateful, bottlenecked by memory bandwidth rather than raw compute, and bound to physical hardware interconnects. Scaling LLM inference isn’t just a matt…

We have moved past the point where a 70GB model was considered “heavy.” With the rise of models like DeepSeek-V3, the GLM series, and other massive Mixture-of-Experts (MoE) architectures, the industry is now grappling with weights exceeding 700GB in optimized formats, and well over 1.2TB in full precision. And parameters keep climbing: Epoch’s AI data tracks frontier models now reaching into the …

As AI moves from experimental chat interfaces to production-grade agents, the need for a foundational memory layer to transform these AI-powered tasks into stateful models is apparent. The absence of a robust memory layer causes agents to lose vital statefulness, leading to: Inability to maintain long-term recall. Without persistent memory to track context across sessions, an agent might recogniz…

Load balancing for LLMs is fundamentally different from load balancing for traditional services like web servers, APIs, or databases. Prompt caching is the reason. Prompt caching typically cuts input token costs by 50-90% and can reduce Time to First Token (TTFT) latency by up to 80%, but those gains assume your request lands on the replica that already has the relevant prefix cached. Under naive…
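The cache-locality point above can be illustrated with a minimal sketch of prefix-affinity routing: requests that share a leading prompt prefix are sent to the same replica, so a cached prefix is more likely to be reused. This is an illustration only, not DigitalOcean's implementation. The function name `pick_replica`, the `prefix_chars` parameter, and the replica names are all hypothetical, and hashing raw characters stands in for whatever token-level scheme a real serving stack would use.

```python
import hashlib


def pick_replica(prompt: str, replicas: list[str], prefix_chars: int = 256) -> str:
    """Choose a replica from a hash of the prompt's leading characters.

    Hypothetical scheme: prompts sharing their first `prefix_chars`
    characters always map to the same replica.
    """
    if not replicas:
        raise ValueError("replicas must not be empty")
    prefix = prompt[:prefix_chars]
    digest = hashlib.sha256(prefix.encode("utf-8")).digest()
    # Interpret the first 8 bytes of the digest as an integer bucket key.
    index = int.from_bytes(digest[:8], "big") % len(replicas)
    return replicas[index]


# Two requests with the same long system prompt land on the same replica.
replicas = ["replica-a", "replica-b", "replica-c"]  # assumed names
system_prompt = "You are a helpful assistant. " * 20
first = pick_replica(system_prompt + "Question one?", replicas)
second = pick_replica(system_prompt + "Question two?", replicas)
```

A plain modulo mapping reshuffles most prefixes whenever the replica count changes and ignores per-replica load, so a production router would likely combine prefix affinity with consistent hashing and load awareness.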

At DigitalOcean, documentation has always been a priority. Developers come to our docs to get unstuck, and the faster they find what they need, the better. Traditional docs pages work, but they require users to know which page to visit, scan for the relevant section, and map generic instructions to their specific setup. That process takes minutes (or longer) when it could take seconds. So we buil…

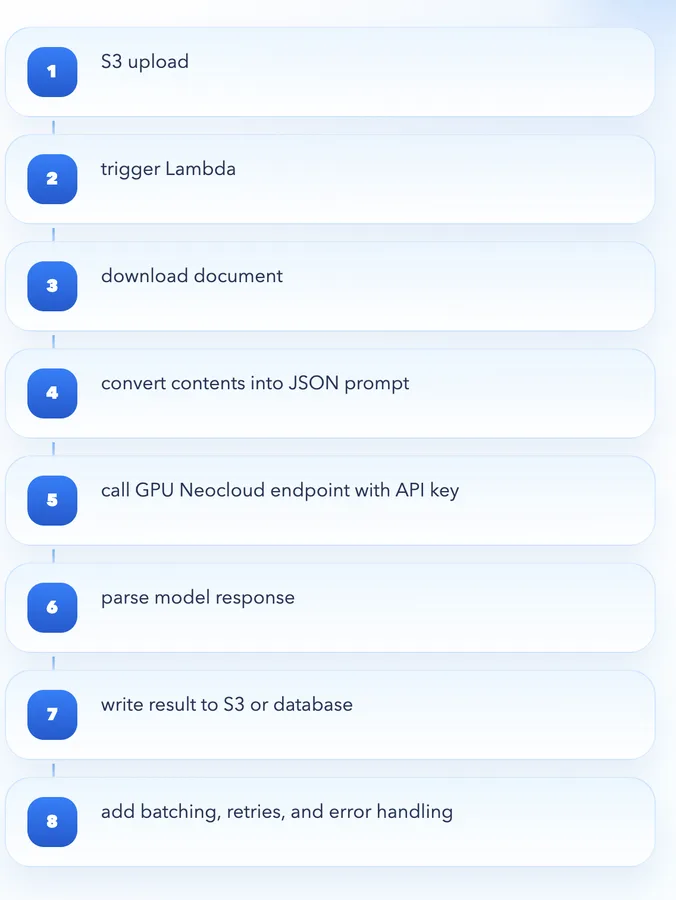

The cloud AI platform ecosystem today looks more powerful than ever, with access to powerful GPUs like NVIDIA H100 and H200, massive libraries of pre-trained models, and full pipelines for fine-tuning and inference. I recently tried deploying a simple inference endpoint for a model. Ideally, it should have taken a few minutes: provision compute, load the model, send a request. Instead, it took clo…

AI is now central to modern software development. Teams across industries are turning to AI to solve product and workflow problems in software. But building production systems is still complex. The hardest part of deploying AI isn’t the model, it’s everything around it. That complexity becomes a glue-code problem when storage, compute, orchestration, networking, authentication, and inference live…

At DigitalOcean, we have been vocal about our strategic shift: we are building the world’s premier Agentic Inference Cloud. Our mission is to provide the foundation where AI-native enterprises build and run production inference at scale. Today, I am thrilled to announce a significant step in that journey: we have acquired Katanemo Labs, Inc., a leader in agentic AI infrastructure. By integrating…

Today, we’re announcing that Arcee AI’s Trinity Large-Thinking is now available in Public Preview on DigitalOcean’s Agentic Inference Cloud, giving developers the ability to run frontier-class reasoning workloads without managing infrastructure or stitching together complex systems. DigitalOcean is proud to partner with Arcee to bring Trinity Large-Thinking to AI builders, available via Serverle…
