linear-algebra

Let $A=[a_{ij}]\in\mathbb{R}^{n\times n}$ be a real symmetric positive definite matrix, and let $b\in\mathbb{R}^n$ be a given vector. Assume that no eigenvector of $A$ is orthogonal to $b$, i.e., for ...

It's been a while since I touched on the theory of canonical forms in Linear Algebra. I was trying to prove the existence of the Jordan canonical form on my own without referring to modules or structure ...

Assuming finite-dimensional vector spaces, I want to prove that if $V$ is a real vector space and its dimension is an even number, then the operator $-I$ on $V$ has a square root. Say that $\dim V =$ ...
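
One standard construction for the claim in this question, given as a minimal sketch and not necessarily the asker's approach: in even dimension, place the quarter-turn block $\begin{pmatrix}0 & -1\\ 1 & 0\end{pmatrix}$ repeatedly along the diagonal. The helper names `matmul`, `sqrt_of_minus_identity`, and `minus_identity` are hypothetical, chosen only for this illustration.

```python
def matmul(a, b):
    """Multiply two square matrices given as lists of rows."""
    n = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]


def sqrt_of_minus_identity(n):
    """Return an n x n block-diagonal matrix S with S*S = -I (n must be even)."""
    if n % 2 != 0:
        raise ValueError("n must be even")
    s = [[0] * n for _ in range(n)]
    for k in range(0, n, 2):
        # Each 2x2 diagonal block is the quarter-turn rotation [[0, -1], [1, 0]].
        s[k][k + 1] = -1
        s[k + 1][k] = 1
    return s


def minus_identity(n):
    """Return the n x n matrix -I."""
    return [[-1 if i == j else 0 for j in range(n)] for i in range(n)]


s = sqrt_of_minus_identity(4)
print(matmul(s, s) == minus_identity(4))
```

Each 2x2 block squares to $-I_2$, so the block-diagonal matrix squares to $-I$. The construction needs the dimension to split into pairs, which is why the function rejects odd `n`.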

I'm trying to work out the principal directions of curvature of a 3D parametric surface, but when I ask Wolfram Engine to find them by calculating the eigenvectors of the shape operator, it just says ...

Ten posts. Vectors. Matrices. Dot products. Matrix multiplication. Derivatives. Gradient descent. Statistics. Probability. Normal distributions. Every single concept explained. Zero of them actually running together in one place. Until now. This post is different from every other in Phase 2. No new concepts. No theory. Just code. Everything you learned over the last ten posts wired together in Nu…

William & Mary Ferguson Professor of Mathematics Chi-Kwong Li was recently honored with two internationally acclaimed awards for his exceptional contributions to mathematics. He received the 2025 Hans Schneider Prize in Linear Algebra from the International Linear Algebra Society (ILAS) and the 2025 Béla Szőkefalvi-Nagy Medal from the Bolyai Institute of the University of Szeged, Hungary. These a…

Since the way we manipulate high-dimensional vectors is primarily matrix multiplication, it isn’t a stretch to say it is the bedrock of the modern AI revolution. The post A Bird’s-Eye View of Linear Algebra: Why Is Matrix Multiplication Like That? appeared first on Towards Data Science.

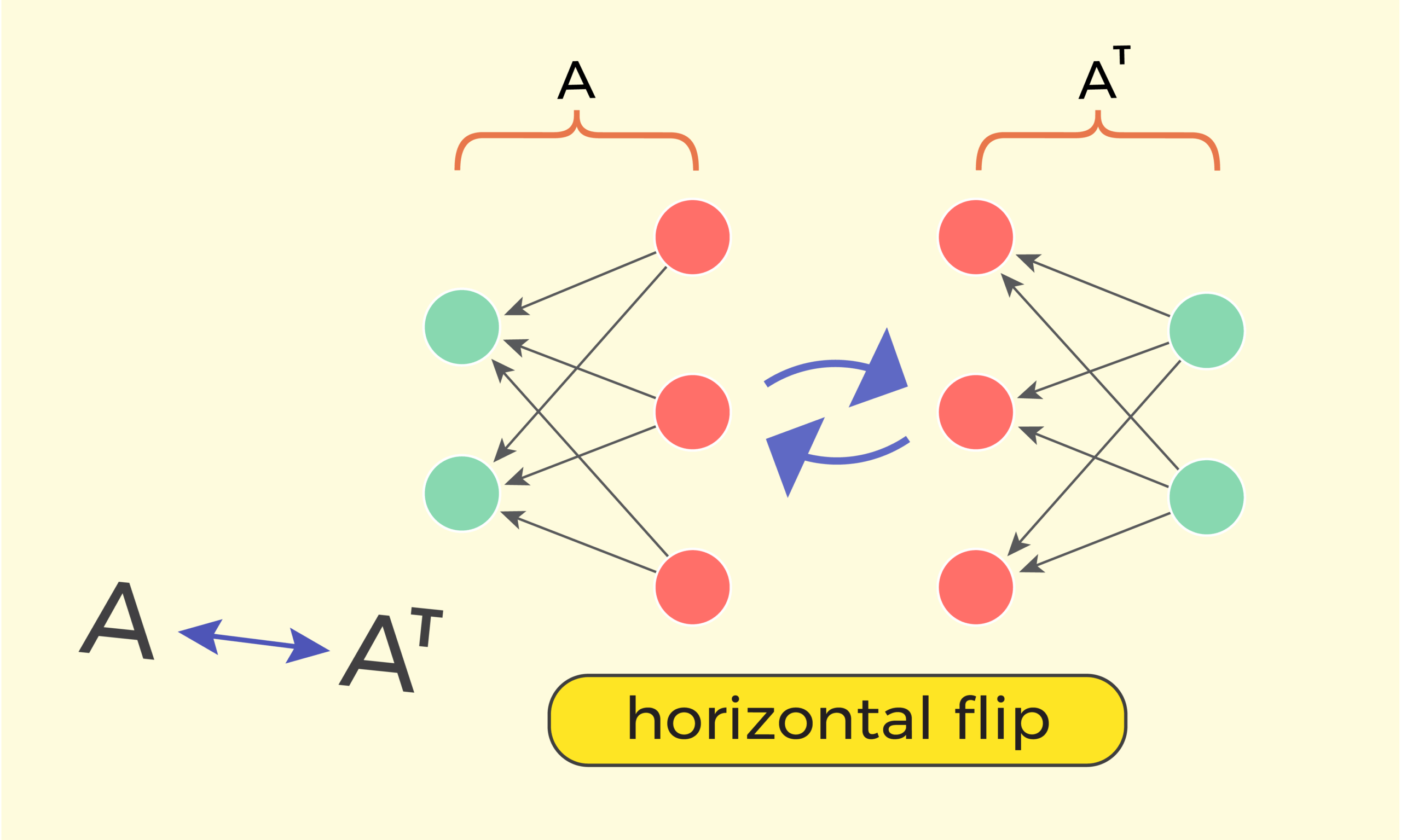

Visualizing matrix transposition, to make sense of transpose-related formulas. The post Understanding Matrices | Part 3: Matrix Transpose appeared first on Towards Data Science.

Linear algebra isn’t just for math class. It has many real-world uses. You can find the applications of linear algebra in computer graphics, data analysis, […] The post Get to Know the Applications of Linear Algebra appeared first on Blog - Great Assignment Help.

The transpose of a matrix turns the matrix sideways. Suppose A is an m × n matrix with real number entries. Then the transpose Aᵀ is an n × m matrix, and the (i, j) element of A is the (j, i) element of Aᵀ. Very concrete. The adjoint of a linear operator is a more abstract concept, though […] The post Transpose and Adjoint first appeared on John D. Cook.
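
The index rule in this excerpt can be checked directly. The following is a minimal sketch in plain Python; the function name `transpose` and the sample matrix are illustrative assumptions, not taken from the post.

```python
def transpose(a):
    """Return the transpose of a matrix given as a list of rows."""
    # zip(*a) groups the j-th entries of every row, i.e. the columns of a.
    return [list(column) for column in zip(*a)]


a = [[1, 2, 3],
     [4, 5, 6]]          # 2 x 3 matrix
at = transpose(a)        # 3 x 2 matrix

# The (i, j) element of A equals the (j, i) element of the transpose.
rule_holds = all(a[i][j] == at[j][i]
                 for i in range(len(a))
                 for j in range(len(a[0])))
print(at, rule_holds)
```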

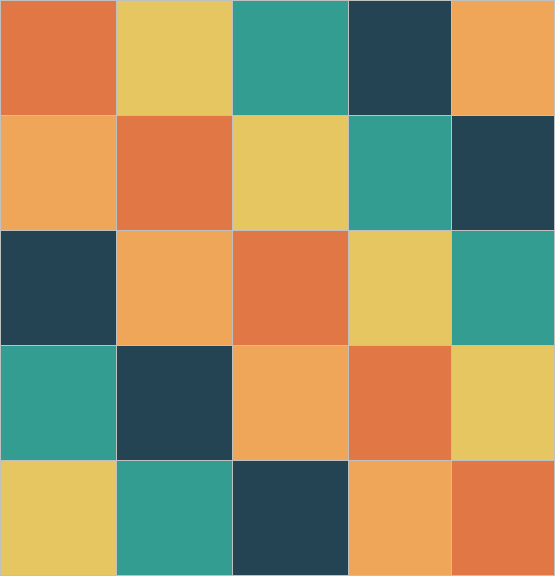

AI algorithms are in the air. The success of those algorithms is largely attributed to dimension expansions, which makes it important for us to consider that aspect. Matrix multiplication can usefully be viewed as a way to expand the dimension. We begin with a brief discussion on PCA. Since PCA is predominantly used for reducing...

Given a square complex matrix A, the Jordan normal form of A is a matrix J such that $P^{-1} A P = J$ for some invertible matrix $P$, and J has a particular form. The eigenvalues of A are along the diagonal of J, and the elements above the diagonal are 0s or 1s. There’s a particular pattern to the 1s, giving the matrix J […] The post Jordan normal form: 1’s above or below diagonal? first appeared on John D. Cook.

When is the discrete Fourier transform of a vector proportional to the original vector? And when that happens, what is the proportionality constant? In more formal language, what can we say about the eigenvectors and eigenvalues of the DFT matrix? Setup: I mentioned in the previous post that Mathematica’s default convention for defining the DFT […] The post Eigenvectors of the DFT matrix first app…
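
A small numerical sketch related to the question in this excerpt. It assumes the unitary normalization $F_{jk} = e^{-2\pi i jk/n}/\sqrt{n}$, which may differ from the convention used in the post. Under that assumption $F^4 = I$, so every eigenvalue $\lambda$ satisfies $\lambda^4 = 1$ and must be one of $1, -1, i, -i$. The helper names below are hypothetical.

```python
import cmath
import math


def dft_matrix(n):
    """Unitary DFT matrix with entries exp(-2*pi*i*j*k/n) / sqrt(n)."""
    w = cmath.exp(-2j * math.pi / n)
    scale = 1 / math.sqrt(n)
    return [[scale * w ** (j * k) for k in range(n)] for j in range(n)]


def matmul(a, b):
    """Multiply two square matrices given as lists of rows."""
    n = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]


def max_deviation_from_identity(m):
    """Largest absolute entrywise difference between m and the identity."""
    n = len(m)
    return max(abs(m[i][j] - (1 if i == j else 0))
               for i in range(n) for j in range(n))


f = dft_matrix(8)
f2 = matmul(f, f)      # under this convention, an index-reversal permutation
f4 = matmul(f2, f2)    # should equal the identity up to rounding error
print(max_deviation_from_identity(f4) < 1e-9)
```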

A few days ago I wrote that circulant matrices all have the same eigenvectors. This post will show that it follows that circulant matrices commute with each other. Recall that a circulant matrix is a square matrix in which the rows are cyclic permutations of each other. If we number the rows from 0, then […] The post Circulant matrices commute first appeared on John D. Cook.
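
The commuting property described in this excerpt can be checked on a small example. This is a minimal sketch that assumes each row is the previous row shifted one step to the right; the post's exact indexing is elided, and the names `circulant` and `matmul` are illustrative.

```python
def circulant(first_row):
    """Build the circulant matrix whose row i is first_row shifted right by i."""
    n = len(first_row)
    return [[first_row[(j - i) % n] for j in range(n)] for i in range(n)]


def matmul(a, b):
    """Multiply two square matrices given as lists of rows."""
    n = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]


a = circulant([1, 2, 3, 4])
b = circulant([5, 0, -1, 2])

# Integer entries keep the comparison exact, with no rounding concerns.
print(matmul(a, b) == matmul(b, a))
```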

This post will look at rotating a matrix 90° and what that does to the determinant. This post was motivated by the previous post. There I quoted a paper that had a determinant with 1s in the right column. I debated rotating the matrix so that the 1s would be along the top because that […] The post What does rotating a matrix do to its determinant? first appeared on John D. Cook.
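
A sketch of the effect this excerpt asks about, under the assumption that "rotating" means a 90° counterclockwise turn of a square matrix. Such a rotation is a transpose followed by reversing the row order; the transpose leaves the determinant unchanged and reversing $n$ rows takes $\lfloor n/2 \rfloor$ swaps, so the determinant is multiplied by $(-1)^{\lfloor n/2 \rfloor}$. The function names are hypothetical, and the cofactor expansion is only meant for small matrices.

```python
def rotate_ccw(a):
    """Rotate a square matrix 90 degrees counterclockwise."""
    n = len(a)
    return [[a[j][n - 1 - i] for j in range(n)] for i in range(n)]


def det(a):
    """Determinant by cofactor expansion along the first row (small n only)."""
    n = len(a)
    if n == 1:
        return a[0][0]
    total = 0
    for j in range(n):
        # Minor: drop row 0 and column j.
        minor = [row[:j] + row[j + 1:] for row in a[1:]]
        total += (-1) ** j * a[0][j] * det(minor)
    return total


a = [[2, 1, 0],
     [1, 3, 4],
     [0, 5, 6]]
n = len(a)
print(det(rotate_ccw(a)) == (-1) ** (n // 2) * det(a))
```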

One of the surprising things about linear algebra over a finite field is that a non-zero vector can be orthogonal to itself. When you take the inner product of a real vector with itself, you get a sum of squares of real numbers. If any element in the sum is positive, the whole sum is […] The post Self-orthogonal vectors and coding first appeared on John D. Cook.
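
A minimal illustration of the phenomenon in this excerpt, assuming the field is the integers modulo a prime $p$ and the inner product is the ordinary dot product reduced mod $p$. The function name `dot_mod` and the sample vectors are assumptions made for this sketch.

```python
def dot_mod(u, v, p):
    """Dot product of u and v with arithmetic carried out modulo the prime p."""
    return sum(x * y for x, y in zip(u, v)) % p


# Over GF(2): (1, 1) is non-zero, yet 1*1 + 1*1 = 2, which is 0 mod 2.
print(dot_mod([1, 1], [1, 1], 2))

# Over GF(5): (1, 2) is non-zero, yet 1 + 4 = 5, which is 0 mod 5.
print(dot_mod([1, 2], [1, 2], 5))
```

Over the reals the same sum of squares would be strictly positive, which is the contrast the excerpt draws.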

Apoorva Khare and I have just uploaded to the arXiv our paper “On the sign patterns of entrywise positivity preservers in fixed dimension”. This paper explores the relationship between positive definiteness of Hermitian matrices, and entrywise operations on these matrices. The starting point for this theory is the Schur product theorem, which asserts that if […]

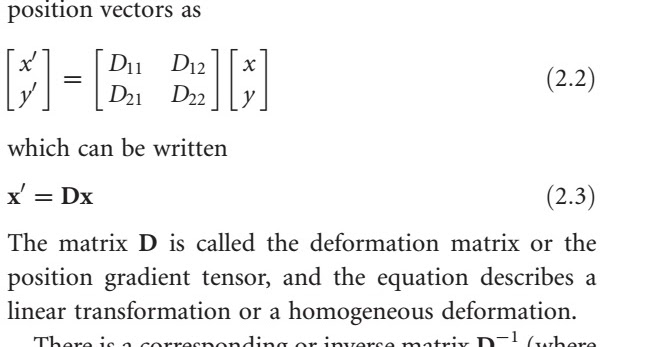

Mathematical description of deformation: Deformation is conveniently and accurately described and modelled by means of elementary linear algebra. Let us use a local coordinate system, such as one attached to a shear zone, to look at some fundamental deformation types. We will think in terms of particle positions (or vectors) and see how particles change positions during deformation. If (x, y) is t…

In the previous blogs (Part 1, Part 2, Part 3, Part 4), we clarified the differences and similarities between diagonally dominant matrices, weakly diagonally dominant matrices, strongly diagonally dominant matrices, and irreducibly diagonally dominant matrices. In this blog, we enumerate what implications these classifications have. If a square matrix is strictly diago…
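
For reference, a short sketch of one of the classifications this excerpt names. It assumes the usual row-wise definition of strict diagonal dominance, $|a_{ii}| > \sum_{j \ne i} |a_{ij}|$ for every row $i$; the blog series may use a different convention, and the function name is hypothetical.

```python
def is_strictly_diagonally_dominant(a):
    """True if every diagonal entry strictly exceeds its row's off-diagonal sum."""
    for i, row in enumerate(a):
        off_diagonal = sum(abs(x) for j, x in enumerate(row) if j != i)
        if abs(row[i]) <= off_diagonal:
            return False
    return True


strict = [[4, 1, 2],
          [1, 5, 3],
          [2, 2, 6]]
weak_only = [[2, 1, 1],   # first row has equality: |2| = |1| + |1|
             [1, 3, 1],
             [1, 1, 4]]

print(is_strictly_diagonally_dominant(strict))
print(is_strictly_diagonally_dominant(weak_only))
```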

research.io
