gpu

RTX 5080 Launched, Rust for CUDA, & LLM GPU Scheduling Deep Dive. Today's Highlights: This week's top GPU news includes a new GeForce RTX 5080 variant, alongside advances in GPU programming tools and deep dives into LLM optimization. Developers can now explore a Rust-to-PTX compiler for CUDA, while a new article sheds light on custom GPU scheduling for large language models. Palit Unveils GeF…

Chicago startup Newtonian Standard says it has identified a memory behavior in NVIDIA GPUs that could serve as the foundation for a new kind of hardware-level security. J.P. O’Donnell, the company’s founder and a former Okta engineer, says he spent 18 months characterizing the behavior across the NVIDIA Turing, Ada Lovelace, and Blackwell GPU architectures. The company… The post A startup says it found hi…

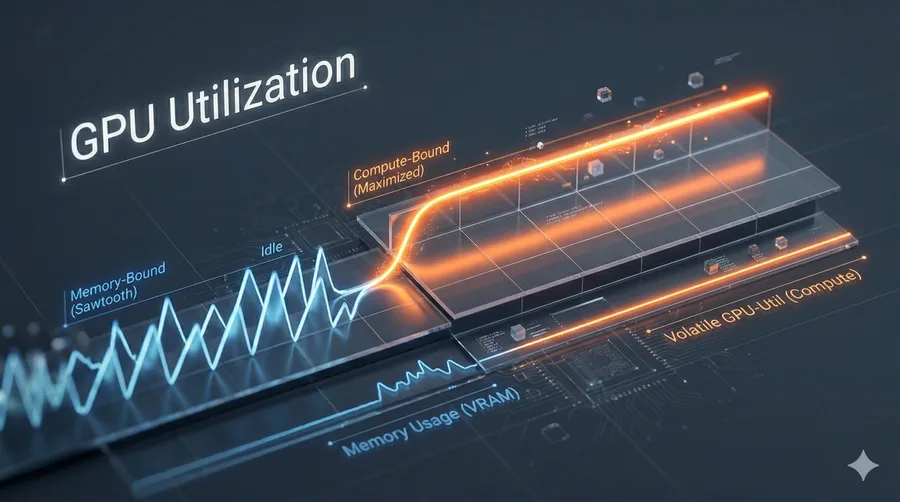

In an age of constrained compute, learn how to optimize GPU efficiency through understanding architecture, bottlenecks, and fixes ranging from simple PyTorch commands to custom kernels. The post A Guide to Understanding GPUs and Maximizing GPU Utilization appeared first on Towards Data Science.

A new technical paper, “SwarmIO: Towards 100 Million IOPS SSD Emulation for Next-generation GPU-centric Storage Systems,” was published by KAIST. Abstract “GPU-initiated I/O has emerged as a key mechanism for achieving high-throughput storage access by leveraging massive GPU thread-level parallelism, while recent industry trends point toward SSDs optimized for ultra-high random-read IOPS. Togethe…

The GPU market in 2025 has finally stabilized after years of volatility, but the complexity of choosing the right hardware has only increased. Between the rise of frame generation technologies, the increasing demand for VRAM in modern titles, and the divergent paths of NVIDIA and AMD, a simple price-to-performance calculation is no longer sufficient. This guide cuts through the marketing fluff to…

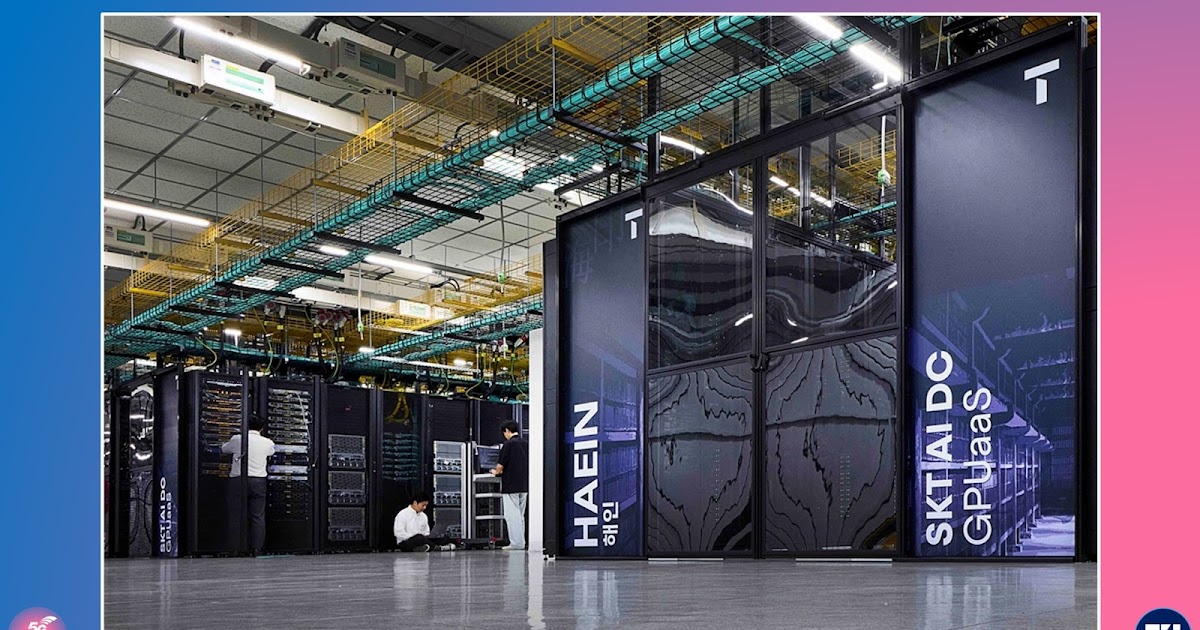

The growing demand for artificial intelligence computing is reshaping the role of telecommunications operators. As AI models become larger and more computationally intensive, the need for high-performance infrastructure has moved into sharp focus. In response, SK Telecom is positioning itself not only as a connectivity provider but also as a key supplier of AI infrastructure through its GPU-as-a-…

Unlock Massive Token Throughput with GPU Fractioning in NVIDIA Run:ai (Feb 18, 2026): Joint benchmarking with Nebius shows that fractional GPUs significantly improve throughput and utilization for production LLM workloads.

Topping the GPU MODE Kernel Leaderboard with NVIDIA cuda.compute (Feb 18, 2026): The leaderboard scores how fast users’ custom GPU kernels solve a set of standard problems like vector addition, sorting, and matrix multiply.

The NVIDIA accelerated computing platform is leading supercomputing benchmarks once dominated by CPUs, enabling AI, science, business and computing efficiency worldwide. Moore’s Law has run its course, and parallel processing is the way forward. With this evolution, NVIDIA GPU platforms are now uniquely positioned to deliver on the three scaling laws — pretraining, post-training and […]

AMD has rolled out ROCm 7.0.2, strengthening its open-source GPU compute platform with broader Linux support, refined AI capabilities, and reliability upgrades for high-performance data center GPUs. Released on October 10 by alexxu-amd on GitHub, the update extends ROCm’s reach to newer hardware and distributions while modernizing several of its core components. The new release […] The post AMD’s…

Key Takeaways: NVIDIA HGX H200 is now available as a DigitalOcean GPU Droplet (a virtual, on-demand machine). NVIDIA HGX H200 GPU Droplets offer significant performance improvements over the H100: up to 2x faster inference and double the memory capacity. NVIDIA H200 GPU Droplets are designed for simplicity, scalability, and cost-effectiveness, with on-demand pricing at just $3.44/G…

While specialized hardware certainly has its role, the future of computing must go beyond narrow optimizations and encompass a more holistic approach for a diverse range of applications. The post The Specialization Trap: Overcoming the Limitations of GPUs and AI Accelerators appeared first on Semiconductor Digest.

In an article published by the Wall Street Journal, which centers on Nvidia's pivotal role and success in the artificial intelligence (AI) sector, CSET's Hanna Dohmen shares her expertise on graphics processing units (GPUs). The post The Nvidia Chips Inside Powerful AI Supercomputers appeared first on Center for Security and Emerging Technology.